Joint Investigation of OpenAI OpCo, LLC

By the Office of the Privacy Commissioner of Canada, the Commission d’accès à l’information du Québec, the Office of the Information and Privacy Commissioner for British Columbia, and the Office of the Information and Privacy Commissioner of Alberta

PIPEDA Findings #2026-002

May 6, 2026

Table of contents

OpenAI’s collection, use and disclosure of personal information

Issue 1: Did OpenAI collect, use and disclose personal information for an appropriate purpose?

Background

- This report of findings examines OpenAI OpCo, LLC’s (referred to in this report as either “OpenAI” or “the Respondent”) compliance with Canada’s Personal Information Protection and Electronic Documents Act (“PIPEDA”), Quebec’s Act Respecting the Protection of Personal Information in the Private Sector (“Quebec’s Private Sector Act”), British Columbia’s Personal Information Protection Act (“PIPA-BC”) and Alberta’s Personal Information Protection Act (“PIPA-AB”) – referred to collectively as the “Acts”.

OpenAI

- Parent company, OpenAI, Inc., is an Artificial Intelligence (“AI”)Footnote 1 research and deployment company registered in the state of Delaware in the United States. It was founded as a non-profit organization in 2015 with the stated goal of “ensuring that artificial general intelligence benefits all of humanity”.Footnote 2 It has no shareholders and is controlled by a Board of Directors.

- The Respondent, the operating company that provides OpenAI’s products to end users, is a subsidiary of OpenAI, Inc. It was also registered in Delaware, three years later, in 2018. It is headquartered in San Francisco, California, United States.

- In 2019, OpenAI, Inc. created another subsidiary, a for-profit enterprise with a “capped profit” structure, OpenAI LP, to raise the capital required to pursue the development of its technology.Footnote 3 Shortly after, OpenAI LP entered into a strategic partnership with Microsoft.Footnote 4

- On October 28, 2025, OpenAI announced the completion of the recapitalization of its for-profit enterprise, which is now a public benefit corporation, called OpenAI Group PBC. The non-profit, OpenAI, Inc., is now called OpenAI Foundation and continues to control the for-profit enterprise.Footnote 5

- According to OpenAI, as of March 31, 2025, the OpenAI group of companies was valued at US $300 billionFootnote 6. In October 2025, several media outlets reported that OpenAI had reached a US $500 billion valuation following a secondary share sale.Footnote 7 More recently, OpenAI announced US $110 billion in new investment at a US $730 billion pre-money valuation.Footnote 8

- OpenAI performs AI research and develops generative AI models. Generative AI is a subset of AI. Generative AI models can produce content such as text, audio, code, videos and images. This content is generated based on information that the user inputs, called a “prompt”, which is typically a question or short instructional text (e.g., “Write me a wedding speech delivered by a best man” or “Tell me about [a famous person]”). The prompt can also include images of what the user is looking for.

- OpenAI provides the option of either free or paid access to its models, which are used by individuals and organizations (for Enterprise, Team and Education applications).Footnote 9 Since its creation, the company has launched several products, including ChatGPT, described in more detail below (which produces text from text, images or voice prompts)Footnote 10, DALL-E (which produces images from text or image prompts)Footnote 11 and Sora (which generates videos from text instructions).Footnote 12 Our investigation focused exclusively on specific versions of ChatGPT, as detailed further in paragraph 16.

ChatGPT

- ChatGPT was released in November 2022. It is a conversation-style service that can respond to users’ prompts and create various types of content, including content as varied as articles, computer code and poems.

- ChatGPT is powered by a foundational Large Language Model (“LLM”). LLMs are extremely large, complex machine learning systems capable of routinely generating elaborate, plausible-sounding – but not necessarily factually accurate – content in response to queries on virtually any topic.

- At the time of its release, ChatGPT was powered by an LLM called GPT-3.5. In March 2023, OpenAI introduced GPT-4, which was enhanced with additional image input capabilities in September 2023. Since then, OpenAI has regularly upgraded, and released new versions of, its models.Footnote 13

OpenAI’s economic model

- OpenAI’s economic model with respect to ChatGPT is primarily based on two major business lines:

- Direct access to ChatGPT, and other products (which are outside the scope of our investigation), via a free or premium subscription, including ChatGPT Team, Edu and Enterprise versions, which offer advanced capabilities and customization options. In April 2024, OpenAI announced that users had the option to use the free version of ChatGPT without an account.Footnote 14

- An Application Programing Interface (“API”)Footnote 15 platform, which allows API customers to build applications powered by OpenAI’s models. The APIs enable these customers to integrate the capabilities of OpenAI’s AI models into their own applications, products or services, which they can then make available to their own end users and customers. OpenAI charges for API usage based on consumption (i.e., “pay for what you use” approach).Footnote 16

- According to OpenAI, as of January 2024, there were several million monthly active ChatGPT usersFootnote 17 and several hundred thousand paid subscribers (i.e., Chat GPT Plus) in Canada. Quebec, British Columbia and Alberta all had a significant user base.

Initiated complaints

- In April 2023, the Office of the Privacy Commissioner of Canada (“OPC”) launched an investigation into OpenAI in response to a complaint alleging that the organization had collected, used and disclosed the complainant’s personal information without consent.

- In May 2023, given the significant privacy impact of Generative AI and its relevance to all Canadians, the OPC decided to close the initial complaint and, along with the Commission d’accès à l’information du Québec (“CAI”), the Information and Privacy Commissioner for British Columbia (“OIPC-BC”), and the Information and Privacy Commissioner of Alberta (“OIPC-AB”), collectively referred to as “the Offices”, initiated investigations pursuant to section 11(2) of PIPEDA, section 81 of Quebec’s Private Sector Act, section 36(1)(a) of PIPA-BC, and section 36(1)(a) of PIPA-AB respectively. The Offices decided to conduct the investigations jointly in order to leverage their respective expertise and resources, while avoiding duplication of efforts for the Offices and OpenAI.

- The Offices specifically focused on ChatGPT and the underlying models that powered it at the time that the investigation was launched (i.e., GPT-3.5 and GPT-4, excluding recent releases). The Offices did not assess later models (we did, however, consider the adequacy of new measures implemented by OpenAI in response to our Preliminary Report of Investigation), or OpenAI’s other AI services (such as image or video generation). However, the findings in this report may still be relevant to them if their development and deployment process is similar to that used for GPT-3.5 and GPT-4 (e.g., models designed to mimic human conversations, using training techniques such reinforcement learning, which is discussed later in this report).

- Our scope did not extend to the unlimited potential applications of the tool by OpenAI’s clients (e.g., API customers, developers of GPTsFootnote 18, individual users).

Issues

- Our investigation sought to determine whether OpenAI:

- collected, used and/or disclosed personal information for purposes that a reasonable person would consider appropriate in the circumstances, and limited this collection to information which was necessary for these purposes;Footnote 19

- obtained valid consent for the collection, use and disclosure of the personal information of individuals based in Canada via or in relation to ChatGPT;

- fulfilled its obligations to be open and transparent;

- took reasonable steps to ensure that the information it generates about individuals is as accurate, complete, and up-to-date as is necessary for the purposes for which it is to be used;

- provided individuals with the ability to obtain access to, and correct, their personal information;

- fulfilled its obligation to establish appropriate retention and disposal procedures for the personal information that it collects, uses and discloses; and

- was accountable for the personal information under its control.

Methodology

- To conduct the investigation, the Offices considered information from a variety of sources, including:

- extensive written representations provided to the Offices by the Respondent through its legal counsel. This included descriptions of the privacy protective measures and tools implemented by OpenAI at the various phases of its models’ development and deployment, as well as the results of OpenAI’s internal evaluations of these measures and tools;

- information that the Offices gathered during interviews with several OpenAI employees, conducted at the Respondent’s headquarters in San Francisco, United States and virtually, as well as at the offices of the OPC in Gatineau, Canada;

- internal testing on Chat-GPT (versions 3.5 and 4) from the perspective of a user, which the investigative team conducted in the OPC’s technical laboratory between November 2023 and May 2024. This included testing the account creation process, examining the content and frequency of OpenAI’s notification about accuracy, using OpenAI’s export data tool, gaining a better understanding of OpenAI’s threshold for determining who is a public individual, and more generally interacting with ChatGPT to replicate the user experience; and

- information that the Offices gathered and analyzed from publicly accessible sources concerning issues relevant to the investigation (e.g., media articles, studies published by OpenAI or independent AI experts). We did not rely on these sources as evidence to support our findings but rather referenced them to illustrate certain practices and provide context, where relevant.

- Upon completion of the evidence-gathering phase of our investigation, the Offices issued a Preliminary Report of Investigation (“the Preliminary Report”), which set out the rationale for our preliminary findings, identified a number of recommendations to bring OpenAI into compliance with the Acts, and invited OpenAI to respond. We also met on various occasions with OpenAI to provide an opportunity for the company to ask any questions it may have on the Preliminary Report and discuss any perceived challenges in responding to our recommendations.

- In its final written response, OpenAI argued that it was, through a combination of existing practices and associated communications, compliant with the Acts in most respects. OpenAI also provided new information and explanations about measures that it has recently implemented in relation to the development and deployment of ChatGPT. These measures were not applied to GPT-3.5 and 4, but rather exclusively to subsequent versions of the models.

Analysis

Jurisdiction

- As indicated previously, the Respondent is incorporated in the U.S. That said, in the course of its commercial activities, OpenAI collects, uses, and discloses personal information of individuals who use ChatGPT across Canada, including of users located in the provinces of Alberta, British Columbia, and Quebec, as explained in the next section of this report.

- Nevertheless, OpenAI challenged the Offices’ jurisdiction (both in whole and in part) on the grounds that ChatGPT was not released in Canada until November 30, 2022, and that OpenAI had no establishment or employees in Canada prior to that date. OpenAI also took the position that the Acts do not apply to outputs generated and used by ChatGPT end users, where these outputs are created for personal and domestic purposes. Finally, OpenAI specifically challenged the jurisdiction of the OIPC-BC.

- The Acts under which this investigation was conducted apply to organizations that, in the course of a commercial activity, collect, use, and disclose the personal information of individuals within each province. As such, each office undertaking this investigation has determined that it has the jurisdiction to investigate and make recommendations or ordersFootnote 20 related to OpenAI’s handling of personal information within their respective jurisdiction of responsibility, whether provincial or federal.Footnote 21

- In addition, PIPEDA applies to organizations outside of Canada where a “real and substantial connection” to Canada exists.Footnote 22 In our view, the circumstances in this matter clearly demonstrate that a real and substantial connection to Canada exists. In coming to this conclusion, we considered the following factors:

- OpenAI offers its services in Canada and has monthly active ChatGPT users, including paid subscribers (i.e., ChatGPT Plus) in Canada.

- OpenAI’s Terms of Service for ChatGPT are applicable to users in Canada and address consent to the collection, use, and disclosure of personal information, and associated rights of access and correction.

- Users located in Canada can visit the OpenAI website and use ChatGPT.

- We note that OpenAI’s activities take place exclusively through a website or app. As referenced in paragraph 54 of A.T. v. Globe24h.comFootnote 23, a physical presence in Canada is not required to establish a real and substantial connection when considering websites under PIPEDA, as telecommunications occur “both here and there.”

- OpenAI’s operations necessitate the transmission and receipt of personal information between Canada and the United States, both when collecting information and disclosing it through ChatGPT.

- OpenAI has collected, used and disclosed the personal information of users in Canada, or derived from Canadian sources, to develop and deploy ChatGPT.

- Similarly, as cited in the LifeLabs Investigation ReportFootnote 24, Alberta’s Privacy Commissioner has jurisdiction to conduct compliance investigations under PIPA-AB. Furthermore, an organization that collects, uses or discloses personal information in Alberta must comply with Alberta privacy legislation, and this includes all aspects of compliance, as provided by section 36(1)(a) of PIPA-AB. If an organization collects, uses or discloses personal information in Alberta, practices throughout the organization must comply with PIPA-AB.Footnote 25 Finally, as cited in the Clearview decisionFootnote 26, Alberta’s Privacy Commissioner has jurisdiction over Clearview because Clearview chose to do business in Alberta and collects, uses, and discloses personal information of Albertans, some of which is hosted on websites with servers in Alberta.

- Like the other Offices, the CAI does not accept the argument raised by OpenAI regarding jurisdiction and endorses the reasons set out in paragraph 25 of this report.

- More specifically, section 1 of Quebec’s Private Sector Act establishes the basis for the application of the Act and the jurisdiction of the CAI. This section specifies that the purpose of Quebec’s Private Sector Act is to establish, for the exercise of the rights conferred by articles 35 to 40 of the Civil Code of QuebecFootnote 27 concerning the protection of personal information, particular rules with respect to personal information relating to other persons which a person collects, uses, or communicates to third persons in the course of carrying on an enterprise within the meaning of section 1525 of the Civil Code of QuebecFootnote 28.

- Open AI collected and used personal information concerning people in Quebec to train its GPT- 3.5 and 4 models, which is a real and important link with QuebecFootnote 29.

- Justice Abella stated in Google Inc. v. Equustek Solutions Inc. that “[t]he Internet has no borders — its natural habitat is global”. Therefore, considering the nature of OpenAI’s activities, the CAI finds that the absence of any establishment or employees in Quebec does not in itself preclude the application of Quebec’s Private Sector Act.

- The invasion of privacy that may result from the collection and use of the personal information of Canadians, and more specifically people in Quebec, occurs at the place of residence of the individuals concerned by this information, and the place of residence constitutes a sufficient connecting factor in this caseFootnote 30.

- In addition, the CAI considers that, for the operation of its business, and more specifically for the purposes of supporting the functionality of its GPT-3.5 and 4 models, OpenAI continues to hold and use personal information concerning Quebec residents.

- Ultimately, considering the nature of the products or services offered by OpenAI and the context in which the collection, use, and disclosure of personal information have been and continue to be carried out, the CAI considers that OpenAI is subject to Quebec’s Private Sector Act regarding the collection, use, and disclosure of personal information concerning Quebec residents.

OpenAI’s challenge to OIPC-BC’s jurisdiction

- In its response to the Preliminary Report, OpenAI argued that OIPC-BC and the OPC cannot both have jurisdiction over the subject matter of this investigation. In support of this argument, OpenAI raised s. 3(2)(c) of PIPA-BC, which states:

(2) This Act does not apply to the following:

… (c) the collection, use or disclosure of personal information, if the federal Act applies to the collection, use or disclosure of the personal information…Footnote 31 - This argument has been thoroughly addressed in previous joint investigations that included the OIPC-BC.Footnote 32

- Exemption Order SOR/2004-220, issued under PIPEDA, clearly exempts an organization from the application of Part 1 of PIPEDA to that organization’s collection, use, or disclosure of personal information if that activity occurs within British Columbia.Footnote 33 Therefore, as an organization, OpenAI’s collection, use, or disclosure of personal information is subject to PIPA-BC instead of Part 1 of PIPEDA to the extent that such activity occurs within British Columbia.

- Privacy regulation is a matter of concurrent jurisdiction and an exercise of cooperative federalism.Footnote 34 Cooperative federalism is a core principle of this modern division of powers, and jurisprudence reflects the concurrent operation of statutes enacted by the federal and provincial levels of government.Footnote 35 PIPA-BC is “designed to dovetail with federal laws” in its protection of quasi-constitutional privacy rights of individuals in British Columbia.Footnote 36

- The legislative history of the enactment of PIPEDA,Footnote 37 PIPA-BC,Footnote 38 and their interlocking structure support the interpretation that PIPEDA and PIPA-BC operate together seamlessly. Moreover, the Court of Appeal for British Columbia recently confirmed the OIPC-BC’s jurisdiction in the context of a joint investigation of an organization operating across provincial and international borders.Footnote 39

- This investigation entails a single organization operating across both jurisdictions with a complex collection, use, and disclosure of personal information. In our view, an interpretation of s. 3(2)(c) that removes the authority of the OIPC-BC in any circumstance where the OPC also exercises authority would be inconsistent with the current approach to privacy regulation in Canada and would frustrate the principle of cooperative federalism.

- The OIPC-BC therefore concludes that s. 3(2)(c) of PIPA-BC does not preclude the application of PIPA-BC to OpenAI’s collection, use, or disclosure of personal information in British Columbia, nor does it limit the jurisdiction of the OIPC-BC to participate in this investigation in any way.

OpenAI’s challenge to the Offices’ jurisdiction prior to the release of ChatGPT

- As mentioned above, in its response to the Preliminary Report, OpenAI made representations setting out its view that in the absence of any establishment, employees or other factors giving rise to a real and substantial connection to Canada prior to the release of ChatGPT on November 30, 2022, the Offices cannot have jurisdiction over, and the Acts cannot apply to, OpenAI’s activities before that date.

- After carefully considering these arguments, we disagree with OpenAI’s assertion and find that several factors confirm that there was a real and substantial connection to Canada prior to November 30, 2022. Specifically:

- As acknowledged by OpenAI, the development of ChatGPT prior to its launch involved and relied, in part, on the collection of the personal information of individuals in Canada or derived from Canadian sources (e.g., Canadian-based online platforms). This development, which took place well in advance of the release of ChatGPT in Canada is inextricably connected to the commercial activity of deploying this service.

- OpenAI has retained the datasets including this personal information and continues to use them for the purpose of training its AI models.

The use of ChatGPT for personal or domestic purposes

- OpenAI represented that “the Acts do not apply to outputs generated and used by ChatGPT end users where such outputs are created for personal or domestic purposes.” The Offices acknowledge that some of the Acts contain exemptions related to collection, use, or disclosure of personal information for personal or domestic purposes.Footnote 40 However, these provisions operate to exempt “individuals” engaged in personal or domestic use, not “organizations” engaged in commercial activities. Furthermore, for these exemptions to apply, the purpose of the collection, use and disclosure must be exclusively personal or domestic.Footnote 41 As is clear from paragraphs 12 to 13 of this report, OpenAI’s operation of ChatGPT cannot be credibly characterized as exclusively personal or domestic.

The Objectives of the ActsFootnote 42

- Generative AI applications such as ChatGPT have the potential to implicate not only privacy rights but also the right to freedom of expression, which is protected under s. 2(b) of the Canadian Charter of Rights and Freedoms (“the Charter”). Subject to limited exceptions, this Charter right can apply to any activity that “conveys or attempts to convey meaning”.Footnote 43 In addition to potentially involving personal information, ChatGPT prompts and generated outputs may be an example of expressive content. Accordingly, any limitations on the operation of ChatGPT may in turn place limits on the Charter-protected right to freedom of expression, including, for example, as it relates to ChatGPT users.

- In its submissions to the Offices, OpenAI highlighted that ChatGPT can promote education, research, creativity and innovation. OpenAI further submitted that the Offices’ analysis should be informed by Charter values, which the OPC, OIPC-BC and OIPC-AB do not disagree with. In addition to balancing the needs of organizations and individual privacy rights consistent with the statutory purposes of some of the Acts,Footnote 44 the forthcoming analysis is also informed by the Charter values of freedom of expression and privacy. In this regard, the OPC, OIPC-BC and OIPC-AB have adopted the approach taken by the OPC in its recent Report of Findings related to Google search results.Footnote 45 In that report, drawing on Supreme Court of Canada jurisprudence, the OPC noted that when Charter protections are engaged, statutory decision-makers are required to balance the statutory objectives of the legislation they oversee with relevant Charter protections (in particular freedom of expression and privacy).Footnote 46 In this case, the Offices’ assessment of appropriate purposes under the Acts (issue 1) has been informed by Charter values.

- In addition to Charter values, consistent with the Federal Court of Appeal decision in Englander v. TELUS Communications Inc., the OPC, OIPC-BC and OIPC-AB interpret their respective acts with “flexibility, common sense, and pragmatism”.Footnote 47 This is consistent with the modern approach to statutory interpretation, which states that “the words of an Act are to be read in their entire context and in their grammatical and ordinary sense harmoniously with the scheme of the Act, the object of the Act, and the intention of Parliament”.Footnote 48 The Supreme Court’s recent decision in Telus Communications Inc. v. Federation of Canadian Municipalities clarified that the modern approach to statutory interpretation entails interpreting legislation in a “dynamic” manner, as “capable of applying to new circumstances including new technology … consistent with the legislature’s purpose.”Footnote 49 Finally, the Courts have also recognized PIPEDA as quasi-constitutional legislation and “part of an international movement towards giving individuals better control over their personal information” which is “intimately connected to their individual autonomy, dignity and privacy.” Courts have further acknowledged the fundamental role that privacy plays in the preservation of a free and democratic society.Footnote 50

Technical background

Model training:

- The operation of an LLM relies on a large number of numerical “weights”, which represent the statistical relationship between different words (or portions of words, which are converted to numerical strings called “tokens”) in different contexts.Footnote 51 These weights are determined based on the LLM’s processing of training data and may be updated as the LLM is subject to further training.

- To train the GPT-3.5 and GPT-4 models underlying ChatGPT, OpenAI employed a two-stage process, described below in simplified terms:Footnote 52

- Pre-training (also called “self-supervised learning”):

- During this initial phase, the model gains a general understandingFootnote 53 of language (or more technically, a general functionality in natural language processing) by analyzing vast amounts of unstructured and tokenized text data, which may include personal information.

- As discussed further in paragraph 51, pre-training datasets are generally comprised of (i) information collected from publicly accessible websitesFootnote 54 (noting that OpenAI removes certain limited categories of websites, redundant content, and content that violates its policies) and (ii) data licensed from third parties. In response to our Preliminary Report, OpenAI represented that for both (i) and (ii), it now also uses a tool to detect and mask identifying information about private individuals from pre-training data (see below).

- Based on this pre-training data, the model builds a representation of the general statistical relationship between tokens, and from this, learns to continuously predict the next token in a sentence.

- Fine-tuning (or Alignment): this phase seeks to further improve the model’s performance on specific tasks and domains (e.g., translation, summarization, conversation) by refining its behaviour and the statistical correlations it establishes between tokens. This helps improve the model’s ability to answer questions in a way that people find useful, as well as prevent the model from returning a response that may be used in harmful ways (e.g., a response that would constitute hate speech, or one that would contain personal information of a private individual). Fine-tuning involves the use of a subset of data that is gathered through individuals’ interactions with ChatGPT, as well as information provided by human trainers (see paragraph 51). Fine-tuning is further divided into substages, including:

- Supervised learning – the model is trained on examples of “ideal” behaviour that is written by human trainers to demonstrate the type of responses that the model should provide in response to various prompts it might receive.

- Reinforcement learning from human feedback (“RLHF”) – the model is “rewarded” if it responds ethically and appropriately to users’ prompts (i.e., with relevant, safe, accurate, unbiased responses) and receives less reinforcement or a “lower reward” if it does not. Concretely, human trainers review multiple LLM responses to the same prompt and rank them in order of most to least appropriate or ethical. As the model “learns” from this feedback, the statistical weights between words are updated.Footnote 55

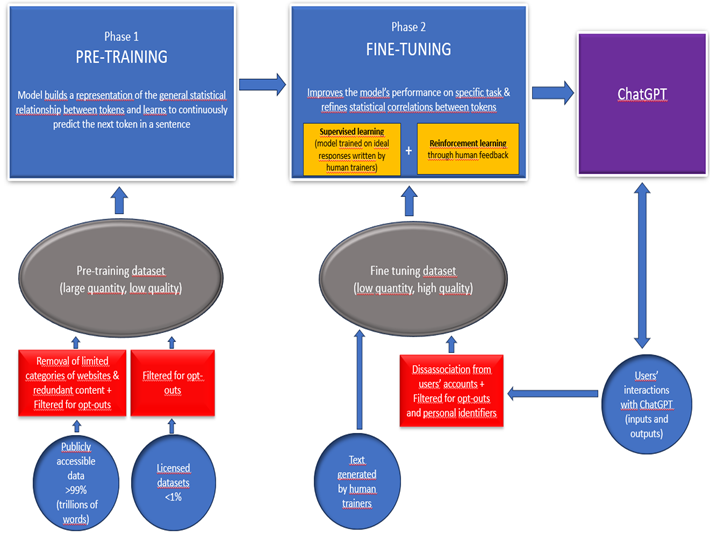

Figure 1 – Model training (GPT-3.5 and 4) Text version of Figure 1

Figure 1. Explains the different phases of ChatGPT’s training

Phase 1: PRE-TRAINING: Model builds a representation of the general statistical relationship between tokens and learns to continuously predict the next token in a sentence.

The pre-training dataset consists of publicly accessible data (more than 99% of the dataset, trillions of words), from which OpenAI removes limited categories of websites and redundant content, and licensed datasets (less than 1% of the dataset). OpenAI filters content for opt-outs.

Phase 2: FINE-TUNING: Improves the model’s performance on specific tasks and refines statistical correlations between tokens, through supervised learning and reinforcement learning.

Fine-tuning datasets consist of text generated by human trainers and users’ interactions with ChatGPT (inputs and outputs), which are disassociated from users’ accounts and filtered for opt-outs and personal identifiers.

- Pre-training (also called “self-supervised learning”):

ChatGPT’s text generation:

- The following is a high-level description of the technical process by which ChatGPT generates a response to a user’s prompt:

- ChatGPT receives text input from users in the form of a “prompt” (defined in paragraph 7).

- The LLM (e.g., GPT-3.5 or ChatGPT-4) breaks down the text input into “tokens”.

- To create a response, the input tokens are run through the LLM, which generates a first output token (often, but not always, the statistically most likely). Subsequent output tokens are generated by the LLM, taking into account both the input tokens and the already generated output tokens.

- The series of tokens generated by the LLM is then converted back into human-readable text, which is provided as the output or response to the user’s prompt.

OpenAI’s collection, use and disclosure of personal information

- Citing security, confidentiality and operational considerations, OpenAI did not grant our request to access and examine its systems. However, in its representations to the Offices, OpenAI acknowledged that, in the course of its activities, it collects, uses and discloses personal information. The Offices therefore consider that this acknowledgement constitutes evidence to substantiate that the information collected, used and disclosed by OpenAI contains personal information within the meaning of the Acts.

- Specifically, OpenAI represented that in order to develop or train its models and facilitate users’ interactions with ChatGPT, it collects data from four primary sources of information. Each source may include personal information:Footnote 56

- Information from “publicly available Internet sources,” which, according to OpenAI, currently represents the vast majority of its training datasets.Footnote 57 OpenAI collects this information either:

- from third parties such as Common CrawlFootnote 58 or Wikipedia, which have already gathered and made that information available. OpenAI represented that it does not circumvent paywalls or account-protected websites when collecting this data; or

- via its GPTBot. This web crawling tool crawls and scrapes the content of websites across the Internet. Website owners can, however, choose to restrict or limit GPTBot access.Footnote 59

- Information that it licenses from third parties, including from various media outlets, a large stock image vendor, and other sources of specialized knowledge.Footnote 60 OpenAI represented that it partners with these content providers to ensure the inclusion of high-quality content in its training datasets, including on specialized topics such as science and mathematics.

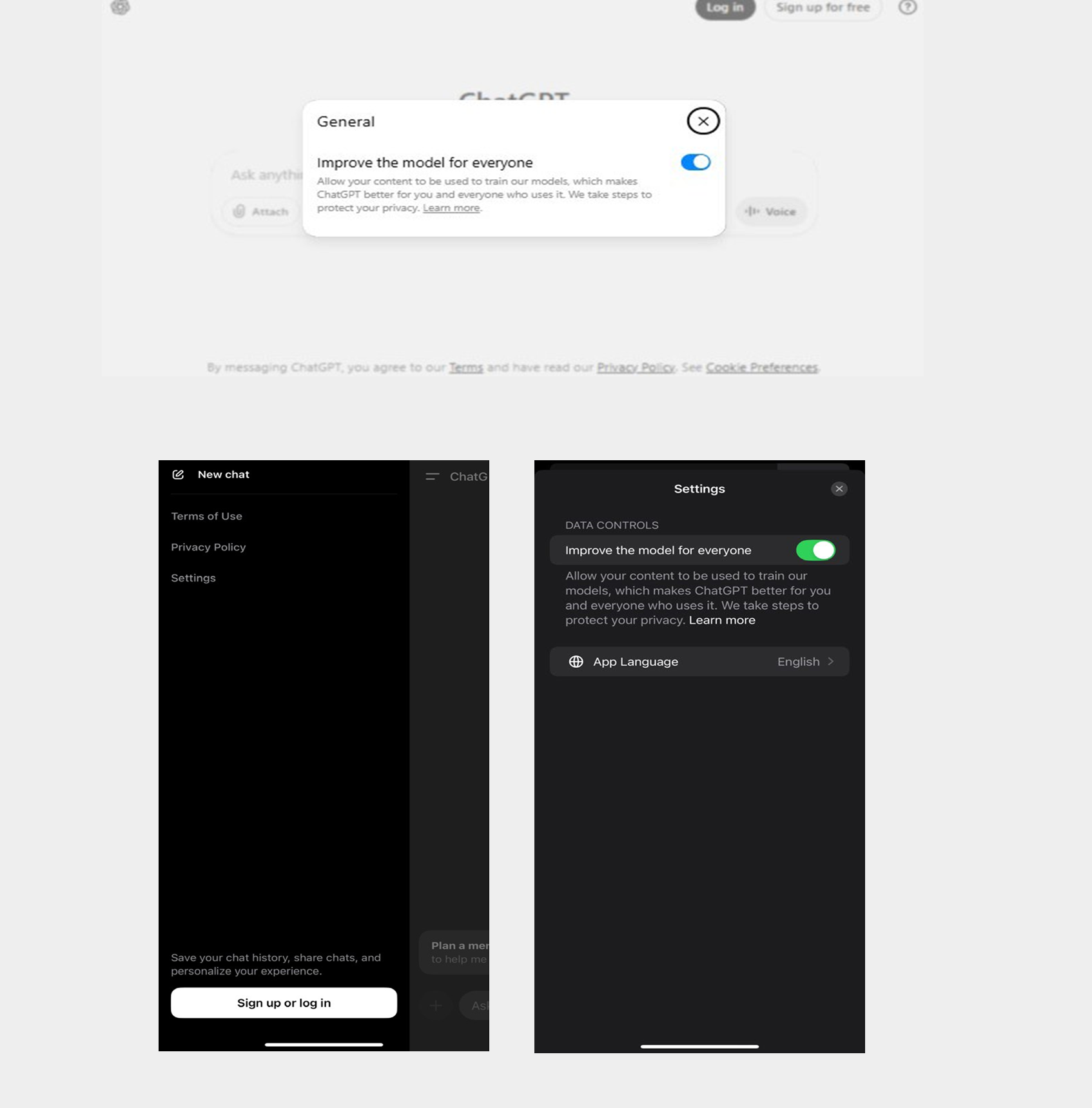

- User interactions with ChatGPT (i.e., model input and output, image and file uploads, feedback provided by the user to OpenAI regarding whether ChatGPT’s response was helpful). Users can choose not to have this data used for model training, as further explained in paragraph 303.Footnote 61

- Conversations generated by human AI trainers (both OpenAI’s employees and contractors). As discussed in paragraph 48, these trainers create conversations by writing queries and ideal responses to fine-tune the model. They also evaluate and rank different model-generated responses based on their “quality, safety, and relevance”.

- Information from “publicly available Internet sources,” which, according to OpenAI, currently represents the vast majority of its training datasets.Footnote 57 OpenAI collects this information either:

- OpenAI stated that the inclusion of personal information in its training datasets is incidental to the broader goal of obtaining a large and varied body of text necessary to effectively train its models. It further submitted that it has mitigation measures in place to limit, to the extent possible, the presence of personal information in the training datasets and model outputs and to minimize associated privacy risks. In response to our Preliminary Report, OpenAI also represented that it continually researches and develops safety improvements and iterates on technical and process enhancements for training AI models. This includes privacy-enhancing techniques that reduce the processing of personal information by detecting and filtering personal identifiers in training datasets, thereby enabling models to learn about language and develop intelligence without learning from the specific masked information (these various mitigation measures are described below in relevant sections of the report). At the same time, OpenAI stated that it is not feasible, nor desirable, to completely remove personal information from training text, as models need to learn how such information fits into a sentence, to be able to respond to users’ prompts.

- We do not accept OpenAI’s assertion that its collection of personal information is merely “incidental”. Rather, we find that OpenAI collects significant amounts of personal information for the purpose of training its AI models. We note that this is consistent with findings made by some other data protection authorities around the world.Footnote 62 That said, we accept that OpenAI does not exclusively target personal information when collecting information for the purpose of building its training datasets.

- Based on all of the above, we conclude that OpenAI collects, uses and discloses personal information via and in relation to ChatGPT.

Purposes for collection, use and disclosure

- For each of the above categories of information, OpenAI identified specific purposes for its collection and processing, which are listed in the table below. However, taken together, we consider OpenAI’s collection, use and disclosure of this personal information to be for the purpose of developing, implementing, continuing to advance and operating ChatGPT (hereinafter referred to as “development and deployment”).

Category of information Main purposes of collection and processing (as identified by OpenAI) User interaction data To provide, administer, maintain, and/or analyze OpenAI’s services

To improve OpenAI’s service, develop new services and conduct research (unless a user has opted out)Footnote 63

To carry out business transfers (i.e., user interaction data may be analyzed to demonstrate the performance, utility and usage patterns of OpenAI’s products and services in the event of a business transfer)Footnote 64Information collected from publicly accessible Internet sources

Information which it licenses from third parties

Conversations generated by human AI trainersTo train AI models, which may include to provide or improve OpenAI’s products and services and to develop new programs and services. - Finally, though OpenAI does use personal information for research purposes, it has not claimed, and we have no evidence to suggest, that its collection of personal information from publicly accessible Internet sources and licensed datasets is carried out solely for research purposes.Footnote 65 We accept OpenAI’s position that while reliance on the research consent exception requires a case-by-case assessment of the specific context, nature of the processing and applicable statutory conditions, OpenAI may, in appropriate circumstances, be able to rely on that exception where those conditions are met.

Issue 1: Did OpenAI collect, use and disclose personal information for an appropriate purpose?

- As explained below, we accept that OpenAI’s purposes for developing and deploying ChatGPT, as listed in paragraph 55 above, are appropriateFootnote 66. We also accept that OpenAI’s practices with respect to personal information collected directly from users via their interactions with ChatGPT are necessary and proportional. However, we find that the manner in which OpenAI initially collected personal information from Internet sources and third parties for the purpose of training its GPT-3.5 and 4 models, as well as the scale and nature of the personal information collected and used from those sources, was overbroad and therefore inappropriate, in contravention of the Acts.

- The OPC’s Guidance on inappropriate data practices: Interpretation and application of subsection 5(3) provides that, in interpreting and applying subsection 5(3) of PIPEDA, the OPC considers certain factors set out by the courts,Footnote 67 meant to assist in determining whether a reasonable person would find that an organization’s collection, use, and disclosure of personal information is for an appropriate purpose in the circumstances. As noted above, these factors are to be applied in a contextual manner, which suggests flexibility and variability in accordance with the circumstances.Footnote 68 In applying subsection 5(3), the courts have determined that the OPC is required to engage in a balancing between the individual’s right to privacy and the commercial needs of the organization concerned.Footnote 69 This balancing must be “viewed through the eyes of a reasonable person.”Footnote 70 More recent jurisprudence has reaffirmed that PIPEDA does not require a balance between competing rights, but rather, between an individual’s right to privacy and an organization’s need to collect personal information.Footnote 71

- Both PIPA-AB and PIPA-BC provide that an organization may collect, use, or disclose personal information only for purposes that a reasonable person would consider appropriate in the circumstances.Footnote 72 Orders issued by the OIPC-AB have also identified a number of questions for determining whether the collection of personal information in an instance was for a reasonable purpose, including whether a legitimate issue exists to be addressed through the collection of personal information.Footnote 73 OIPC-BC also considers factors similar to those considered by the OIPC-AB in determining whether the purpose is reasonable in the circumstances.Footnote 74

- In order to determine whether the purposes for which the personal information was collected by the company are serious and legitimate within the meaning of section 4 of Quebec’s Private Sector Act, the CAI takes into account the lawfulness of the purpose and its compliance with the principles of law, justice and fairness.Footnote 75 More specifically, section 4 of Quebec’s Private Sector Act requires a company that collects personal information to determine the purposes for the collection before engaging in that collection. In addition, the necessity test developed under section 5 of Quebec’s Private Sector Act requires that the purposes justifying the collection be legitimate, important and real, and that the resulting invasion of privacy be proportional to the importance of those purposes. The burden of establishing the seriousness and legitimacy of the purposes and the necessity of collecting the personal information rests with the company collecting the information.

- The principles of appropriate purposes, necessity and proportionality are also discussed and described in the Principles for responsible, trustworthy and privacy-protective generative AI technologies (“Generative AI Principles”), adopted by the federal, provincial and territorial privacy authorities on December 7, 2023.Footnote 76

- In the following sections, we evaluate OpenAI’s purposes for developing and deploying ChatGPT, taking into consideration the various factors and questions mentioned above.

Sensitivity of the personal information

- As further detailed in paragraphs 133 and 296, based on the limited privacy-protective measures in place at the time of developing its GPT-3.5 and GPT-4 models (in particular at the data collection and pre-training stages), we find that OpenAI’s training datasets necessarily included significant amounts of personal information of varying levels of sensitivity, such as medical information, individuals’ opinions on sensitive or controversial topics including about other individuals, and information relating to children.

Legitimate need or issue

- OpenAI’s stated intention for developing and deploying ChatGPT is to “provide benefits to humanity”, such as assistance with individuals’ everyday tasks, conducting scientific research or inspiring creativity. We accept that this purpose represents a legitimate need or issue for OpenAI.Footnote 77

- More generally, we appreciate that there are many potentially beneficial applications for the implementation of safe, trustworthy and privacy-protective generative AI technologies, and LLMs in particular in today’s society. Indeed, many research publications and media reports have commented on the concrete benefits of generative AI. For example, the Organisation for Economic Co-operation and Development (OECD) highlighted potential benefits in relation to language translation and interpretation, coding and content creation, and even healthcare.Footnote 78 The New York Times described “35 ways real people are using A.I. right now,”Footnote 79 including to write a speech, learn languages or write Excel formulas. Other articles emphasized AI’s benefits for business (e.g., ability to enhance efficiency and productivity, improve customer experience or optimize business operations)Footnote 80 or its potential to accelerate and improve research, resulting in groundbreaking ideas that push the limits of current possibilities.Footnote 81

- We also recognize that there are current and potential future risks associated with the use of LLMs that are released without adequate protections. These risks include the potential for the models to disseminate false information (particularly when used to make or support non-automated decisions about an individual), harm individuals’ privacy and reputation (including youth and other vulnerable groups), or assist malicious actors in conducting cybersecurity attacks.

- As described further below (see paragraphs 128, 294, and 378), OpenAI has explained to the Offices the risk mitigation measures that it has implemented at various phases of its AI models’ development and deployment, including to protect against inappropriate, unauthorized uses of ChatGPT, and to mitigate the risks outlined in the above paragraph.

- While, as explained in paragraph 17, the scope of this investigation did not include consideration of all the possible ways in which its clients (e.g., API customers, developers of GPTs, individual users) may use ChatGPT, we strongly encourage OpenAI to ensure that it has robust measures in place to ensure that ChatGPT is not used in violation of OpenAI’s Usage policies or for otherwise inappropriate purposes. In particular, we encourage OpenAI to ensure that ChatGPT is not used for purposes that fall under the No-Go Zones identified in the OPC’s Guidance on inappropriate data practices: Interpretation and application of subsection 5(3).Footnote 82

Determination of the legitimate, real and important nature of the purposes, under Quebec’s Private Sector Act

- As required under Quebec’s Private Sector Act, the CAI examined the specific purposes identified by OpenAI (see paragraph 55), to determine if they are legitimate, real and important.

Are the company’s purposes for collecting personal information from public sources accessible on the Internet legitimate, real and important?

- OpenAI stated to the Offices that its purpose for collecting information from public sources accessible on the Internet, which includes personal information, is to train its AI models – this training includes testing its products and services and developing new programs and services.

- In this regard, the CAI considers that the collection of personal information to train the ChatGPT AI model, which involves making a chatbot available to the public, testing this model and developing new models, can be considered to be for a legitimate purpose.

- As to whether OpenAI’s purposes are real and important, the CAI acknowledges that training an AI model requires a significant quantity of data. The CAI also recognizes that mass collection from publicly accessible sources, particularly through web scraping, may result in the collection of personal information without such information having been specifically targeted for collection.

- However, as explained above, the Offices do not accept OpenAI’s assertion that its collection of personal information is merely incidental to the collection of vast amounts of training data accessible on the web and rather considers that a significant volume of personal information is collected.

- Despite this consideration, and subject to the question of the sufficiency of OpenAI’s privacy risk mitigation measures (to minimize the inclusion of personal information and in particular sensitive information), which will be addressed later in this report, the CAI nonetheless accepts OpenAI’s argument that it is necessary for its AI model to learn how personal information fits into a sentence so that it can respond to various user prompts. The CAI further accepts that to accomplish this, the model must have been trained based on a significant quantity of relevant scenarios.

- The CAI is also aware of and stresses the importance of ensuring that AI models of this kind are sufficiently tested before being made available to the public. Properly training, evaluating and testing models is indeed key to ensuring that they are sufficiently accurate, coherent, fair and safe to use. For example, OpenAI has represented that its models must learn and understand how different types of personal information fit into language, so that it can respond to user prompts in a way that respects OpenAI’s policies, including rejecting requests for private or sensitive information about individuals. In this regard, the CAI considers that training the ChatGPT model, testing it and developing new programs and services related to the overall objective of making a chatbot available to the public are for real and important purposes.

- Finally, considering that OpenAI’s overall purpose of developing and deploying ChatGPT, can serve the public interest, the CAI finds (again, subject to the question of mitigation, which will be addressed later) that it is useful and important that the models have access to multiple examples of texts that may contain personal information, to learn how such information fits into the structure of sentences, for the purposes of being able to respond to user prompts correctly and efficiently.

Are the company’s purposes for collecting personal information relating to users’ interactions with ChatGPT legitimate, real and important?

- When it comes to the collection of personal information from users’ interactions, the CAI accepts that it is legitimate, real and important for OpenAI to want to provide, administer, maintain, analyze and test services that are related to providing a chatbot service.

- Similarly, the CAI considers that the research and development purposes alleged by the company are legitimate, real and important, provided that this research and development is linked to the overall objective of making a chatbot available to the public.

Effectiveness

- Based on our testing and open-source researchFootnote 83, the Offices accept that LLMs such as ChatGPT are generally effective at generating and simulating natural language and performing other natural language processing tasks, such as text summarization or language translation.

- However, as explained in the Accuracy section below (Issue 4), we find that at the time of developing and deploying GPT-3.5 and 4, OpenAI did not comply with the accuracy requirements under the Acts.

Less-privacy invasive means and proportionality

- As explained above, we accept that: (i) OpenAI’s general purpose for collecting, using and disclosing personal information – i.e., to develop and deploy its LLMs – represents a legitimate need; and (ii) that ChatGPT is generally effective at generating natural conversational language, subject to the accuracy concerns identified in the above paragraphs. However, we must also consider whether OpenAI could have developed and deployed its GPT-3.5 and 4 models through less privacy-invasive means and whether the harms to privacy resulting from OpenAI’s practices were proportional to the potential benefits of these LLMs.

- To this end, we examine OpenAI’s collection, use and disclosure of personal information from both: (i) publicly accessible websites and licensed datasets; and (ii) users’ interactions with ChatGPT.

Collection, use and disclosure of personal information from publicly accessible websites and licensed datasets

- As explained below, we find that when developing and deploying its GPT-3.5 and 4 models, OpenAI did not appropriately minimize the invasion of privacy resulting from its collection, use and disclosure of personal information, as required under the Acts. We also find that the harms to privacy resulting from this development and deployment were not proportional to the potential benefits of ChatGPT. While we acknowledge that OpenAI may have faced technological hurdles in attempting to train its GPT-3.5 and 4 models in a less privacy-invasive manner, we note that in response to our Preliminary Report, OpenAI represented that it has recently implemented new mitigation measures that significantly reduce the privacy risks associated with the development of Generative AI models. This demonstrates that, with innovation and forethought, less privacy-invasive means of training GPT-3.5 and 4, at a comparable cost and with comparable effectiveness, would have been available.

- As discussed further at paragraph 127 and following, while the exact size of OpenAI’s training datasets has not been made public, OpenAI represented that it includes trillions of words. Furthermore, our investigation revealed that, while OpenAI does not exclusively seek to collect personal information when collecting publicly accessible data online, this immense dataset includes significant amounts of personal information of varying levels of sensitivity.

- OpenAI asserted that the nature of its processing is not intrusive given that it relies on unstructured datasets that are not indexed or organized by reference to an identifier, uses tokenization (i.e., raw text is transformed into numerical representations and is therefore not used in its original format) and does not process personal information in a targeted manner (i.e., to gain specific knowledge about private individuals or generate profiles). OpenAI further stated that the processing aims at using personal information to teach AI models the concept and meaning of personal information in a general manner.

- That said, OpenAI also acknowledged, and we agree, that there are risks associated with this practice, including in relation to privacy. With this in mind, the company explained that it has implemented measures to decrease the presence of personal information in the datasets.

- In particular, OpenAI submitted that when collecting training text for GPT-3Footnote 84, it took steps to avoid pirated content and to remove duplicative and/or harmful content (e.g., Child Sexual Abuse Material, hate speech, erotic content, spam) in the training text. For the training of GPT-4 onwards, OpenAI explained that it took additional steps to identify and remove certain sites that were designed as an index or collection of personal information from the training text.Footnote 85 Finally, OpenAI stated that it does not circumvent paywalls or account-protected websites or obtain information from the dark web.

- OpenAI represented that it only removes a small portion of website categories from the data it includes in its training datasets. It also confirmed that it does not screen out social media websites, websites aimed at children, or websites that may contain sensitive information regarding other vulnerable groups. Further, given that OpenAI only removes limited website categories from training data, information from discussion forums would likely be included in that dataset.

- Data from sources such as social media and discussion forums contain vast amounts of personal information (including that of children), some of which will be sensitive, and much of which will reflect the subjective and potentially inaccurate views and opinions of the individuals who post this information.

- OpenAI explained to the Offices that the collection of data from publicly accessible, non-gated websites is necessary to teach its models about language, and that the goal in showing the model this content is to teach the model how such content is expressed, not to endorse its truth. More specifically, OpenAI stated that in order to develop highly capable general-purpose AI models (“GPAI models”), the models need to be trained on large and diverse datasets, which necessarily includes informal, real-world exchanges. These allow models to learn how language is used organically in interactions between individuals, particularly in informal, spontaneous contexts outside of structured or edited writing, such as casual conversations and everyday exchanges.

- Moreover, OpenAI submitted that complete anonymization of training data for the development of GPAI models remains technically impossible as it would compromise the effectiveness of the models and their ability to serve broadly beneficial societal purposes. OpenAI further represented that despite efforts to employ and expand the use of innovative privacy protecting measures (such as synthetic data), the current state-of-the-art does not offer less intrusive means for developing highly capable AI models.Footnote 86

- In response to these representations, the Offices acknowledge that there may be benefits in collecting personal information in this context and that a large and varied body of training text may assist ChatGPT in understanding and responding effectively to users’ queries.

- However, we do not accept OpenAI’s assertion that there were no less privacy-protective ways to develop GPT-3.5 and 4 at the same (or comparable) cost and with the same effectiveness. Indeed, as explained below, OpenAI represented in response to our Preliminary Report that it has recently implemented new mitigation measures that significantly reduce the privacy risks associated with the development of Generative AI models. In our view, this demonstrates that, with innovation, less privacy-invasive means of training GPT-3.5 and 4 would have been available.

- We find that, absent these mitigation measures, OpenAI’s development and deployment of GPT-3.5 and 4 resulted in a privacy-invasive collection of significant amounts of personal information, which necessarily increased the risk of privacy harms, such as those resulting from inadvertent disclosure of private information in model outputs, breaches of training data and more generally, individuals’ loss of control over their personal information. Furthermore, learning about such privacy risks or incidents may have negatively impacted individuals’ willingness to engage openly in digital society.

- Finally, the fact that OpenAI’s training datasets included personal information contained in sources such as social media and discussion forums—which may often be inaccurate, for example where they include opinions that are not rooted in fact and/or are biased— would also have amplified the risks of inaccurate personal information appearing in model outputs.

- OpenAI submitted that there is no concrete evidence of a systemic issue of inaccurate personal information appearing in ChatGPT’s outputs. The company further indicated that it takes concrete steps to improve accuracy and mitigate privacy risks in model outputs, such as by training its models to refuse to provide private or sensitive information, even if it is publicly accessible (for more detail, see Issue 4 – Accuracy).

- With respect to the potential harms that could result from the disclosure of personal information via ChatGPT responses, OpenAI represented that personal information appearing in its model outputs would most likely be included because it is widely accessible on the Internet (as opposed to, for example, being found in a single source).

- While we accept that this may mitigate, to a certain extent, the risk that personal information occasionally posted on the Internet may be disclosed by ChatGPT, the fact that personal information is widely accessible on the Internet does not mean that it is necessarily accurate and unbiased. This is especially true at a time when misinformation and disinformation can spread across the Internet at an unprecedented speed. More importantly, as further explained in Issue 2, the fact that personal information is accessible does not represent a carte blanche to collect and use it without limits.

- Therefore, we find that OpenAI’s mitigation measures in place at the time of training its GPT-3.5 and 4 models were not sufficient to limit the scope of its collection, use and disclosure of personal information to that which was necessary and proportional to effectively train its models.Footnote 87 For these reasons, we find that at that time, the benefits of that practice did not outweigh the risks of privacy harms.Footnote 88 Footnote 89

Collection, use and disclosure of personal information included in users’ interactions with ChatGPT

- As a preliminary matter, the Offices recognize that OpenAI’s collection of user interactions is necessary to efficiently respond to user queries. Therefore, this section focuses on OpenAI’s use and potential disclosure of personal information included in these user interactions for the purpose of developing and deploying its AI models.

- We acknowledge that the use of a certain level of user interaction data may be beneficial and necessary to properly train the models, especially during the fine-tuning phase. As mentioned in paragraph 48, OpenAI explained that fine-tuning involves (among other steps) the use of a subset of users’ interactions to improve the model’s ability to answer user queries in a way that people find useful, that is, in a more relevant, safe, accurate, and unbiased way.

- Furthermore, OpenAI represented that, when training its GPT-3.5 and 4 models, it put in place certain measures to mitigate the risks associated with utilizing users’ interactions for training purposes. As explained in more detail at paragraph 294, these measures included disassociating interactions from user accounts, using a third party filtering tool to remove personal identifiers, allowing users who have an account to choose whether their interactions with ChatGPT would be used for model training, and informing users (albeit not adequately, as discussed at paragraph 293) not to include sensitive information in their interactions with the tool. The company also instructed human model trainers to exclude from the fine-tuning datasets any information that could constitute personal information. Finally, OpenAI explained that it only used a small subset of the user interactions it collected to train its models.

- As we note in paragraph 296 of this report, the third-party filtering tool that OpenAI used at the time of training GPT-3.5 and 4 removed only a subset of the information that would constitute personal information as defined under the Acts, such that sensitive information, like opinions, could still be included in the user interaction data used for training (and potentially disclosed in model outputs). However, we accept that the combination of the various measures outlined above significantly mitigated the risk of privacy harm associated with training the model using personal information included in user interactions.

- In light of the necessity to train the model using user interactions, and of the associated benefits highlighted above (i.e., to provide more effective and efficient responses), we accept that the benefits of this practice were proportional to the residual risk of privacy harm, taking into consideration the mitigation measures implemented by OpenAI.

Findings related to GPT-3.5 and 4

- As mentioned above, we find that the nature and scale of OpenAI’s collection and use of personal information from publicly accessible websites and licensed datasets, at the time of training its GPT-3.5 and 4 models, was overbroad and therefore not necessary and proportional. Consequently, we findFootnote 90 that OpenAI contravened subsection 5(3) of PIPEDA, sections 2, 11, 14 and 17 of PIPA-BC, sections 11, 16 and 19 of PIPA-AB and section 5 of Quebec’s Private Sector Act.

- Furthermore, we accept that the collection, use and disclosure of personal information from users’ interactions with ChatGPT were effective in advancing OpenAI’s legitimate need to develop and deploy ChatGPT – in particular, to improve model outputs in responses to user prompts – and that the benefits of this practice were proportional to the residual risk of privacy harm, taking into consideration the mitigation measures implemented by OpenAI. Consequently, we find this aspect of the complaint to be not-well founded.

Recent developments and conclusion under PIPEDA

- In its response to the Offices’ Preliminary Report, OpenAI emphasized that it was not aware of a case where Canadian privacy commissioners ruled against an organization regarding the processing of publicly accessible information, where the purpose of collecting the information was not also found to be inappropriate. We would highlight the case of RateMDs, where the OPC has held that certain data practices can be inappropriate within the meaning of subsection 5(3) of PIPEDA even if the overarching purpose is not itself inappropriate.Footnote 91 Furthermore, the jurisprudence on subsection 5(3) of PIPEDA emphasizes the importance of a contextual, case-by-case assessment, rather than one that is focused exclusively on the overarching purpose for the information.Footnote 92

- In response to our Preliminary Report, OpenAI also informed the Offices that it has recently developed a tool that can detect and mask identifying information about private individuals in publicly accessible Internet data and in licensed datasets used to pre-train OpenAI’s models. OpenAI further explained that it now also uses this tool (in lieu of the previous third-party filtering tool) to redact personal identifiers from users’ interactions used to fine-tune the models.

- According to OpenAI, this new tool can identify a wide range of personal information about private individuals in the training datasets (e.g., names, phone numbers) and mask it prior to it being used for training, so the models do not learn from it. OpenAI indicated that the tool can also detect other categories of personal information that are similarly private or personal but which it has never been trained to recognize. Accordingly, to the extent that a broader range of personal information, such as an individual’s opinions or characteristics, are included in the datasets, the tool can detect and redact identifiers which would link such information to an identifiable individual.

- OpenAI further stated that the tool uses context to detect whether information is private or personal in nature and should be masked. More specifically, the company noted that the tool can distinguish between personal information about private individuals, personal information about public figures, and information about fictional characters. As a result, OpenAI stated that it is able to mask personal information about private individuals, as well as to determine when to mask private information about public figures (e.g., their personal address or personal phone number) and when to maintain information about such public figures which may be of interest to the public (e.g., their business address or business phone number). Finally, OpenAI provided the Offices with the results of recent internal evaluations showing the effectiveness of the tool at detecting different types of personal information.

- More specifically, OpenAI submitted that it conducted evaluations against other filtering tools using the open-source “PII Masking 300k benchmark”.Footnote 93 OpenAI explained that when fine-tuned on a small subset of the benchmark, its new filtering tool reached 98–99% recall (i.e., the proportion of true instances of personal information that a system correctly identifies) with a 3–6% false positive rate (i.e., proportion of instances of text incorrectly flagged as personal information by the system). Furthermore, OpenAI stated that it ran additional evaluations using 80,000 synthetic chat excerpts labeled by professional data annotators. Comparing the tool’s predictions with these human labels, OpenAI indicated that it found substantial alignment with human judgment, far surpassing the third-party filtering tool it previously used.Footnote 94

- The OPC accepts that this new tool – combined with OpenAI’s other mitigation measures in place at the various stages of development and deployment of ChatGPTFootnote 95 – can significantly reduce the risk that the personal information of private individuals, and sensitive information more specifically, will be included in the datasets used to train OpenAI’s future models. Similarly, the OPC accepts that this will also reduce the risk of such information being disclosed in model outputs.

- In making this determination, the OPC also considered the additional transparency measures that OpenAI has committed to implementing. In particular, as further discussed in other sections of this reportFootnote 96, OpenAI has agreed to publish a Canadian blog post on its website explaining its privacy practices and take measures to promote the post and its contents in the Canadian media. The blog post will inform individuals that user interactions may be reviewed and used to train its models, advise users not to share sensitive information via their interactions with ChatGPT, and provide information about the categories of content used to train its models. We find that these transparency measures will enhance public awareness of OpenAI’s privacy practices, thereby further limiting individuals’ sharing, and OpenAI’s collection of, sensitive information.

- Finally, OpenAI informed the Offices that it has deprecated (i.e., retired) its GPT-3.5 and 4 models and confirmed that the new mitigation measures, including the above-mentioned filtering tool, have been used throughout the training of its current models powering ChatGPT.Footnote 97

- Therefore, with a view to reflecting an appropriate balancing of freedom of expression and privacy, the OPC finds the aspect of the complaint related to the collection, use and disclosure of personal information from publicly accessible websites and licensed datasets to be well-founded and conditionally resolved under PIPEDA.Footnote 98

- This conclusion is based on OpenAI’s representations and our understanding, as well as our expectation that OpenAI will continue to effectively implement and improve these mitigation measures and develop further innovative privacy-protective techniques in the future.

Issue 2: Did OpenAI obtain valid consent and meet its obligation to inform individuals with respect to its collection, use and disclosure of personal information?

- For the reasons outlined below, we find that OpenAI did not obtain valid consent for its collection, use and disclosure of personal information for the purpose of developing and deploying its GPT-3.5 and 4 models.

- The following section (Issue 2A) assesses whether OpenAI’s collection and use of personal information from publicly accessible websites or licensed third-party sources was compliant with the Acts’ consent provisions. We then consider the Respondent’s collection and use of personal information from users via their interactions with ChatGPT (Issue 2B).Footnote 99 Finally, we examine OpenAI’s disclosure of personal information from these various sources (Issue 2C).

Issue 2A: Did OpenAI obtain valid consent for the collection and use of personal information from publicly accessible websites and licensed third-party sources?

Analysis under PIPEDA, PIPA-BC and PIPA-AB

- We find that OpenAI did not have implied consentFootnote 100 for its collection and use of individuals’ personal information from publicly accessible websites and licensed third-party sources for the purpose of training its GPT-3.5 and 4 models.Footnote 101

- Pursuant to sections 5(1), 6.1 and 7, as well as Principle 4.3 of Schedule 1 of PIPEDA, sections 6-8 of PIPA-BC and sections 7-8 of PIPA-AB, the consent of individuals is required for the collection, use or disclosure of their personal information, unless an exception applies. The type of consent required will vary depending on the circumstances and the type of information involved.

- The Guidelines for obtaining meaningful consent (“the Consent Guidelines”) jointly issued by the OPC, OIPC-AB and OIPC-BC provide that “organizations must generally obtain express consent” when: (i) the information being collected, used or disclosed is sensitive; (ii) the collection, use or disclosure is outside of the reasonable expectations of the individual; and/or (iii) the collection, use or disclosure creates a meaningful residual risk of significant harm.Footnote 102

- OpenAI represented that it relies on individuals’ implied consent to collect and process the personal information found on publicly accessible websites and in licensed datasets that it uses to pre-train its models. OpenAI argued that the Acts may not be designed to address the complex challenges and nuances associated with innovative technologies and business models where there is no direct relationship between the parties involved. OpenAI submitted that the Offices should apply a contextual and balanced approach based on “flexibility, common sense, and pragmatism”, and it justified its reliance on implied consent based on the following factors:

- the pressing and substantial benefits of training AI models, which OpenAI states have already provided “dramatic benefits to humanity”;

- the necessity of this information for the processing, given that models must train on a large amount of text to develop an understanding of how language works;

- the impracticability of direct notification to individual persons given the impossibility of identifying and locating them based on the information found in the unstructured raw datasets;

- OpenAI’s reasonable efforts to be transparent about its information handling practices related to the development and training of its models, and the public notice that it provides through readily available means such as its Privacy Policy or Terms of Use;

- OpenAI’s use of de-identification measures and other risk mitigation measures, such as the unstructured nature of training datasets, the use of filters to exclude certain sites and content from training data, or the fact that personal information is not processed in a targeted manner to build profiles of, or gain knowledge about, specific individuals (mitigation measures that are discussed throughout this report); and

- OpenAI’s view that the balance of interests favours an opt-out form of consent. OpenAI further states that it continually analyzes and seeks to optimize the balance of risks and benefits involved in training and making the models available to the public, taking into account the various measures it has implemented to reduce the processing of personal information and mitigate potential risks.

- Consent is a core requirement of PIPEDA, PIPA-AB and PIPA-BC, limited only by carefully defined legislative exceptions expressly set out in the respective legislations. This has been confirmed in Supreme Court of Canada jurisprudenceFootnote 103, as well as in federalFootnote 104 and provincialFootnote 105 case law.

- We acknowledge that the development of new technologies, such as AI, may raise new challenges for organizations when it comes to complying with existing privacy laws – in particular, with respect to consent. However, we note that the Acts are technology-neutral, and we are required to assess OpenAI’s practices against the existing applicable legal frameworks. The authority to enact new laws or amend current laws, whether broad in scope or specifically dealing with generative AI or other emerging technologies, remains with Parliament and the legislatures.

- Consistent with the modern approach to statutory interpretation, the Offices have interpreted the Acts with “flexibility, common sense, and pragmatism”. As noted above, we have relied on this approach to assess OpenAI’s practices against the Acts, taking into consideration the various factors listed above at paragraph 122.

Sensitivity

- Information that will generally be considered sensitive, and thus require a higher degree of protection, includes health and financial data, ethnic and racial origins, political opinions, genetic and biometric data, an individual’s sex life or sexual orientation, religious or philosophical beliefs, and young people’s personal information.Footnote 106

- While the exact size of the datasets that OpenAI collects directly from publicly accessible sources and indirectly from licensed third-party sources has not been publicly disclosed nor confirmed to the Offices during the course of the investigation, OpenAI represented that it includes trillions of words. Indeed, the Common Crawl database alone – one of the sources which OpenAI relies on to build its datasets – contains petabytes (i.e., millions of gigabytes) of data regularly collected since 2008 (i.e., over 250 billion pages spanning 17 years, with 3–5 billion new pages added each month).Footnote 107

- OpenAI maintained that, as part of its mitigation measures aimed at reducing the presence of personal information in the final pretraining datasets used to train GPT-3.5 and 4, it removed certain categories of websites from the raw data collected from publicly accessible websites (i.e., log-in gated websites, websites with pirated or harmful content, adult websites, and for GPT-4 specifically, websites that aggregate personal information about individualsFootnote 108) and “deduplicated” (i.e., removes redundant) content.

- Regarding data licensed from third parties, OpenAI submitted that it selected datasets that do not contain extensive personal information, while recognizing that they may contain personal information incidentally (e.g., a licensed encyclopedia may contain an entry concerning a public figure who is still alive).

- In any event, OpenAI stated that the amount of data alone is not determinative of the sensitivity of the information, particularly given the non-access-gated, publicly accessible data and the inherently non-intrusive nature and purpose of the processing. OpenAI also represented that potential privacy risks are further mitigated by the unstructured nature of the pretraining datasets, which are not indexed or organized by reference to individuals, and the fact that information is tokenized (see paragraph 85).

- OpenAI did not grant our request to access and review their systems and, consequently, we were unable to directly assess the effectiveness of these mitigation measures. As mentioned above, the categories of websites that OpenAI removed from the GPT-3.5 and 4 pretraining datasets were very limited. In particular, OpenAI confirmed that it did not screen out social media websites, websites aimed at children, or websites that may contain information regarding other vulnerable groups whose information is more likely to be considered sensitive.

- OpenAI’s pretraining datasets primarily consisted of data that was publicly accessible on the Internet, such as forum posts, product reviews, user comments, social media, essays, articles or books. A New York Times article reported that OpenAI, and other AI companies, also transcribed one million hours of YouTube videos to harvest text for their AI models.Footnote 109

- In that context, and given the absence of specific mitigation measures aimed at detecting and masking private identifying information in the GPT-3.5 and 4 pretraining datasets, we find that these datasets necessarily included sensitive information such as financial or medical information, information about religious or political beliefs, opinions about sensitive or controversial topics, and information relating to children; some of which will have been posted by third parties (i.e., not the individual themselves).

- While licensed datasets represented a much smaller subset of OpenAI’s pretraining data, we find that they might also have included sensitive personal information. For example, news articles about an individual’s past criminal offences, including mere suspected offences, may reveal sensitive information such as their ethnic origin or health information.Footnote 110

- We find that OpenAI could not rely on implied consent for the collection of such sensitive information for the purpose of training its GPT-3.5 and 4 models.

Reasonable expectations