2015-2016 Annual Report to Parliament on the Personal Information Protection and Electronic Documents Act and the Privacy Act

Time to Modernize 20th Century Tools

Office of the Privacy Commissioner of Canada

30 Victoria Street – 1st Floor

Gatineau, QC

K1A 1H3

(819) 994-5444, 1-800-282-1376

© Minister of Public Services and Procurement Canada 2016

Cat. No. IP51-1E-PDF

1913-3367

Follow us on Twitter: @PrivacyPrivee

September 2016

The Honourable George Furey, Senator

The Speaker

The Senate of Canada

Ottawa, Ontario K1A 0A4

Dear Mr. Speaker:

I have the honour to submit to Parliament the Annual Report of the Office of the Privacy Commissioner of Canada on the Personal Information Protection and Electronic Documents Act for the period from January 1, 2015 to March 31, 2016 and the Privacy Act for the period from April 1, 2015 to March 31, 2016.

Sincerely,

(Original signed by)

Daniel Therrien

Privacy Commissioner of Canada

September 2016

The Honourable Geoff Regan, P.C., M.P.

The Speaker

The House of Commons

Ottawa, Ontario K1A 0A6

Dear Mr. Speaker:

I have the honour to submit to Parliament the Annual Report of the Office of the Privacy Commissioner of Canada on the Personal Information Protection and Electronic Documents Act for the period from January 1, 2015 to March 31, 2016 and the Privacy Act for the period from April 1, 2015 to March 31, 2016.

Sincerely,

(Original signed by)

Daniel Therrien

Privacy Commissioner of Canada

Commissioner’s Message

Privacy in 2016: Time to modernize 20th century tools

It is my pleasure to present my Office’s 2015-2016 Annual Report to Parliament. Beginning this year, our reports on the Privacy Act—which applies to the federal public sector—and the Personal Information Protection and Electronic Documents Act (PIPEDA)—which applies to private sector organizations—are combined. An amendment to PIPEDA in June 2015 aligned its reporting period with that of the Privacy Act, enabling us to prepare one annual report, as opposed to having two reports tabled at different points of the year.

A key theme of this report is the constant and accelerating pace of technological change and its profound impact on privacy protection. In both the public and private sectors, it’s clear that we need to update the tools available to protect Canadians’ personal information. Not doing so, in my view, risks eroding the trust and confidence citizens have in federal institutions and in the digital economy.

New technologies enable businesses and governments to collect and analyze exponentially greater quantities of information using complex computer algorithms, leading to advances in areas ranging from the tailored treatment of diseases to the optimization of traffic flows.

At the same time, they have created the potential for information to be used in questionable ways. In the private sector, businesses can track and analyze customer behaviour like never before, opening the door to invasive marketing and differentiated services based on inferred characteristics. And in the public sector, federal departments and agencies involved in national security now have increased powers to share information about any or all Canadians’ interactions with government and, potentially with the assistance of Big Data analytics, to profile ordinary Canadians with a view to identifying security threats among them.

Keeping up with all these changes to succeed in our mission to protect and promote the privacy rights of individuals has been a challenge—especially when operating under privacy legislation that predates many of these technological innovations. There was no Internet when the Privacy Act was proclaimed in 1983. Facebook had yet to be imagined when PIPEDA came into force in 2001.

We are left with 20th century tools to deal with 21st century problems. Meanwhile, 90 percent of Canadians feel they are losing control of their personal information and expect to be better protected. Given the speed and breadth of technological change and its impact on their privacy rights, it is hardly surprising that Canadians feel uninformed and unable to control their personal information. This suggests that greater action is needed from regulators, legislators, the courts, business leaders and policymakers to protect citizens.

This is the backdrop against which we present this annual report on the activities undertaken by my Office in carrying out our mission over the period covered—including investigations of complaints; advice to Parliament; Privacy Impact Assessments (PIAs) and audits; public education; conducting and supporting research on key issues; international and federal-provincial-territorial cooperation; and court action.

This report details some of the key work we have done and will continue pursuing to modernize Canada’s legislative, legal and regulatory frameworks to protect privacy in the face of challenges brought forth by new technological realities.

Privacy Act reform

Ongoing technological evolution has had a significant impact on privacy. Keeping up with all these changes has been a struggle, especially when operating under privacy legislation that predates many impactful technological innovations.

The first chapter highlights our work over the past year to pursue reform of the Privacy Act. After more than three decades, this law is out of step with today’s existing and emerging privacy risks. In March 2016, I appeared before Parliament and shared recommendations for amending the Act in three broad categories:

- Technological change;

- Enhancing transparency; and

- Legislative modernization.

The Privacy Act came into force at a time when information was collected and shared in paper form and federal offices were filled with filing cabinets, decades before email, mobile devices and social media. Today, vast amounts of personal information can be collected effortlessly and lost far more easily. In recent years, we have seen massive government breaches affecting tens, even hundreds, of thousands of citizens. Among our recommendations, we call for an explicit requirement for federal institutions to safeguard personal information under their control and to report material data breaches to my Office. Private sector organizations already face, or will soon face, both of these obligations.

Citizens today have grown to expect greater and clearer details on the use of their personal information by organizations, and rightfully so. People increasingly want to know what departments do with their information, with whom they share it, and why. In its current form, the Privacy Act does little to help Canadians find answers, which is why we have recommended strengthening transparency reporting requirements for government institutions and limiting the exemptions that can be applied to requests for access to personal information under the Act.

Among our recommendations, we also ask that departments be legally required to carry out PIAs and to consult our Office before tabling legislation with potential privacy implications, so issues can be addressed early, before they affect individuals. And we recommend creating an explicit necessity requirement for personal information collected by a government program or activity to avoid the over-collection made possible by new technology.

My Office’s work to encourage the modernization of the federal public sector privacy law is detailed further in this report.

Strategic privacy priorities

Last year, my Office conducted a priority-setting exercise, following extensive consultations with stakeholders and the public. As a result, in May 2015, we announced four strategic privacy priorities that would help guide our work for the next five years:

- the economics of personal information;

- government surveillance;

- reputation and privacy; and

- the body as information.

This report provides important updates on our work in all these key and emerging areas.

Consent and the economics of personal information

In addition to the changes needed on the public sector front, we also need to address new challenges in the private sector, in particular around the notion of consent, which has been a cornerstone of PIPEDA.

Personal information has become a highly valuable commodity, leading to the proliferation of new technologies and the emergence of new business models. In this increasingly complex market, many are questioning how Canadians can meaningfully exercise their right to consent to the collection, use and disclosure of their personal information.

The Internet of Things raises further questions about our ability as individuals to provide meaningful, informed consent. Everything—from cars to refrigerators—is being connected to the Internet. These machines are constantly collecting information about our habits, and organizations are finding ways to analyze and combine it with data collected by other devices in our homes and elsewhere. With so much that can be done with this information, organizations find it challenging to explain their intentions, which they may not yet fully know themselves, further compounding the complexities around obtaining meaningful consent.

We recently launched an examination and consultation on the foundational issue of consent in today’s digital world. We hope to identify potential enhancements to the current model and bring clearer definition to the roles and responsibilities of the various players — individuals, organizations, regulators and legislators — who could implement them. We will then apply those improvements within our jurisdiction and recommend other changes to Parliament as appropriate. Our discussion paper on the topic, and certain potential solutions, are described further in this report.

Government surveillance and Bill C-51

We know the risks to our security are real and complex. Canadians want to feel secure, but they do not want this goal to come at any and all cost to their privacy. They want a balanced and reasonable approach. Our goal in relation to this priority is to contribute to the adoption and implementation of laws and other measures that protect both national security and privacy.

In the past year, we contributed to the development, by Innovation, Science and Economic Development Canada, of transparency reporting guidelines for telecommunications service providers. Going forward, we will continue to call for similar guidelines to be developed for federal institutions.

In the months leading up to the passage of Bill C-51, the Anti-Terrorism Act, 2015, I made a number of representations to Parliament detailing serious privacy concerns with certain provisions in the Bill, which was then unfortunately enacted without amendment.

Since the adoption of the Bill, we have begun using our audit and review powers to examine how information is being shared between federal institutions and to ensure that the implementation of the new provisions respects the Privacy Act. In our first phase, outlined fully in chapter two, we surveyed departments, which reported using the new information sharing powers under the Security of Canada Information Sharing Act (SCISA) to make 58 disclosures and 52 receipts of personal information, all concerning individuals they described as suspected threats to security.

Looking forward, our next phase will focus on reviewing and verifying the circumstances of this sharing. Our goal is to provide as clear a picture as we can of the use of SCISA and other laws, to inform the public and Parliamentary debate that will take place in the course of the review of Bill C-51 that was announced by the government. Our hope is that this review will result in the adoption of measures that will effectively protect privacy in relation to the collection and sharing of national security information.

Chapter two also includes our review and recommendations concerning the Communications Security Establishment’s (CSE) sharing of metadata with “Five Eyes” partners. After the CSE discovered that, because of a reported technical failure, more information about Canadians was being shared than intended, the Minister of National Defence put the program on hold. The CSE nevertheless assessed the privacy risk of this incident as low, because the data being shared was metadata rather than the content of communications and because Five Eyes partners are mutually committed not to spy on each other’s citizens. We questioned that assessment, given our research showing that metadata can indeed be very sensitive, and our recommendations include amending the National Defence Act to include specific legal safeguards to protect Canadians’ privacy.

Reputation and privacy

Canadians recognize the personal and professional benefits of participating in the online world; however, they are increasingly concerned about their online reputation, and we are seeing new privacy challenges in this area in both the public and private sectors.

With this strategic privacy priority, my hope is that we can help create an environment where individuals may use the Internet to explore their interests and develop as persons without fear that their digital trace will lead to unfair treatment.

In January 2016, we launched a discussion paper and sought submissions on the privacy issues related to online reputation, with a view to ultimately developing a concrete position on how to address these issues, including the right to be forgotten, and to help inform public and Parliamentary debate.

The body as information

The growing popularity of wearable technologies, such as fitness trackers, along with smart vests and other connected health-related products, adds a new and even more personal dimension to the Internet of Things.

A global industry has arisen to capitalize on information about the body. While some of these products promise real benefits for both individuals and the health care system as a whole, technologies used to extract information about and from our bodies carry the most sensitive personal information.

This area is developing quickly and it is not clear that appropriate privacy protections are always in place.

We want to raise awareness about the potential privacy risks associated with technology designed to read information from and about our bodies. And we want to conduct research and offer helpful guidance in this emerging area. In the short term, we have scanned current and emerging health applications and digital health technologies, such as fitness apps and heart rate monitors. We plan to test some of these products in our technology lab to better understand their privacy implications and inform consumers accordingly.

Year in review

The final chapter of this report details all the other important work undertaken by my Office to protect and promote privacy over the last reporting period.

New technologies and business models have given rise to privacy issues that were not necessarily envisioned when our current privacy laws were conceived, or that challenge the relevance of existing frameworks. At the same time, my Office has been given new responsibilities, further stretching our resources and challenging our ability to do the kind of proactive work we believe is necessary.

Establishing our strategic privacy priorities has certainly helped us focus on current and emerging issues and be strategic about how we devote our resources.

However, breach reports to my Office are growing year over year, particularly since 2014, when Treasury Board policy made government reporting of material breaches mandatory. And Bill S-4, the Digital Privacy Act, will soon make reporting by private sector organizations a legal obligation.

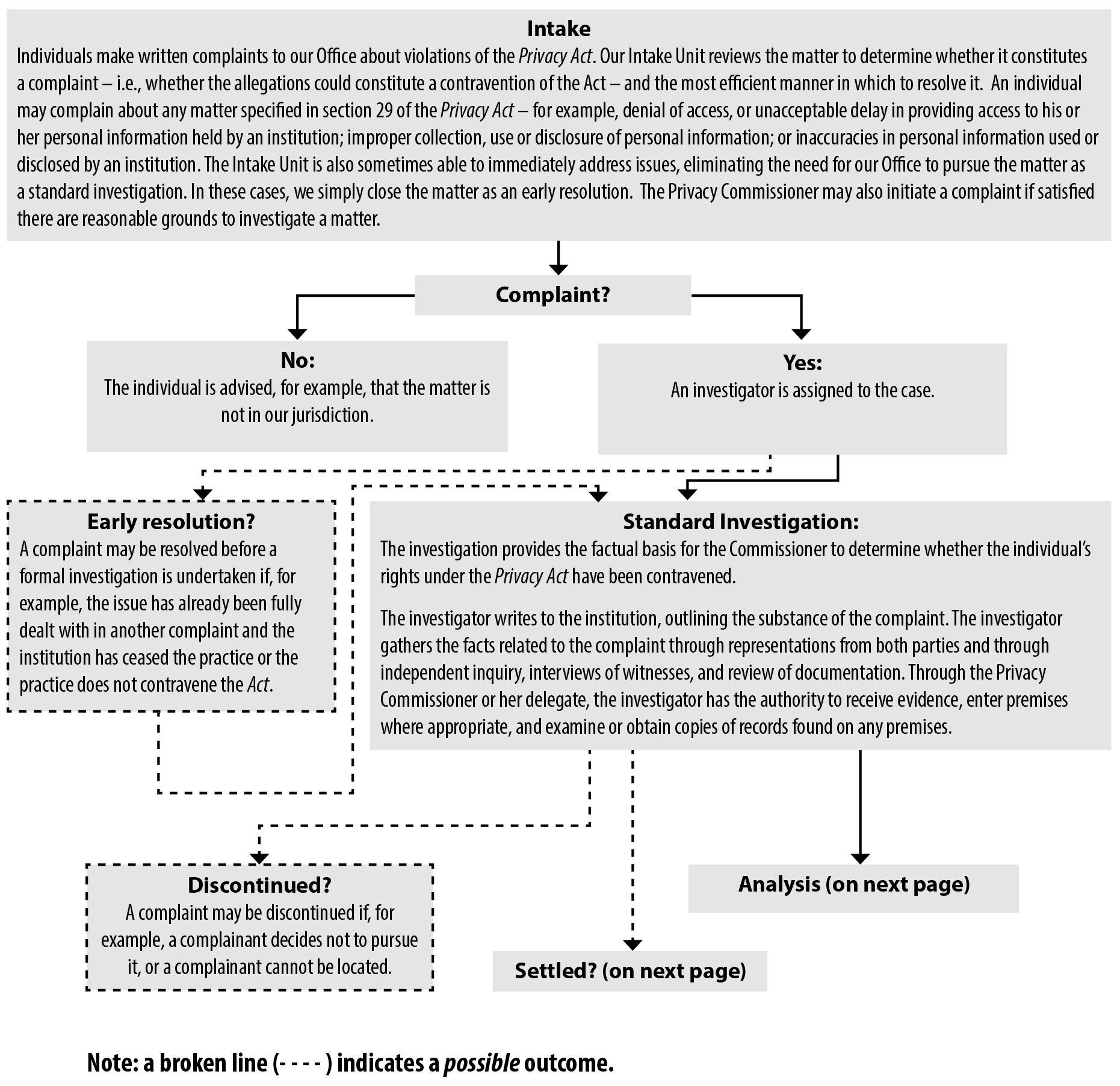

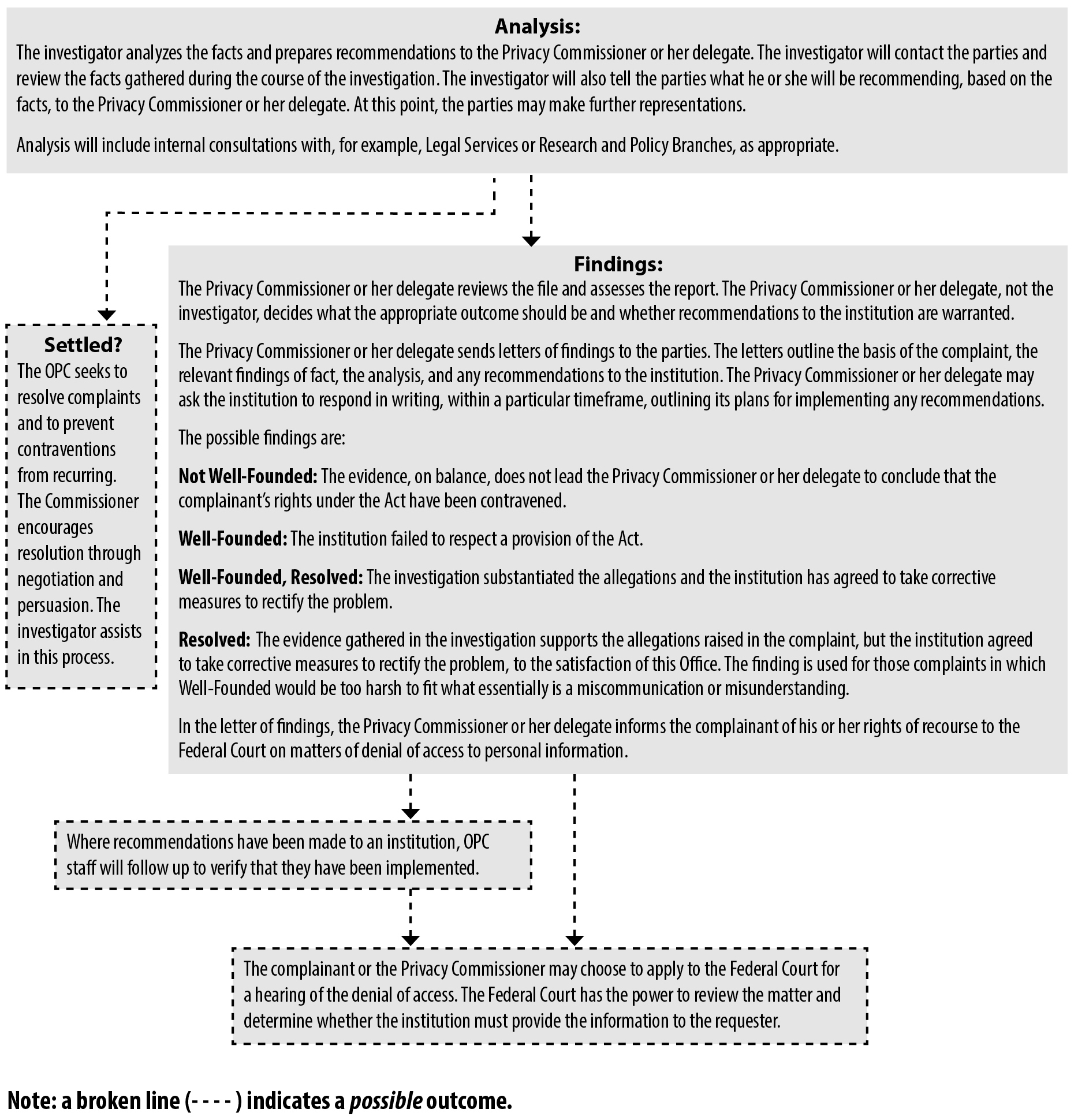

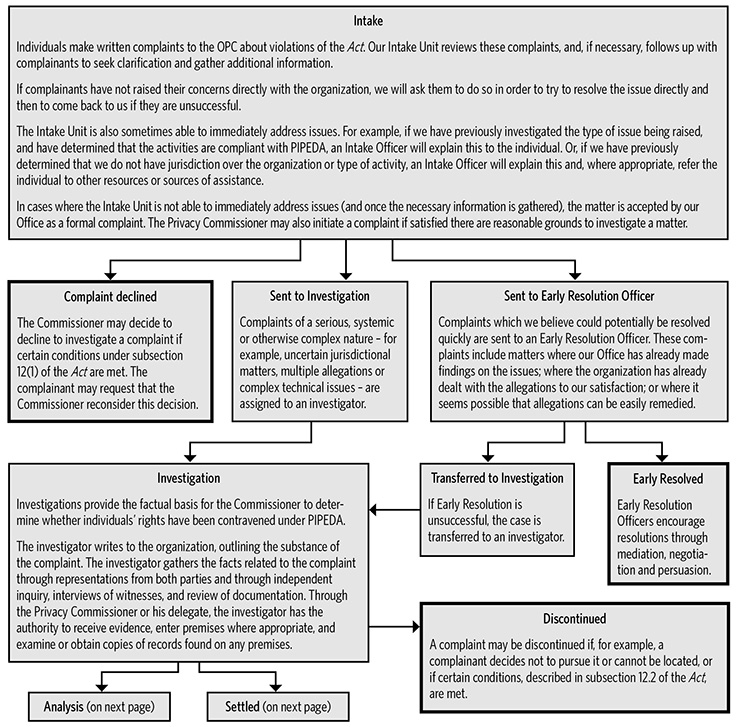

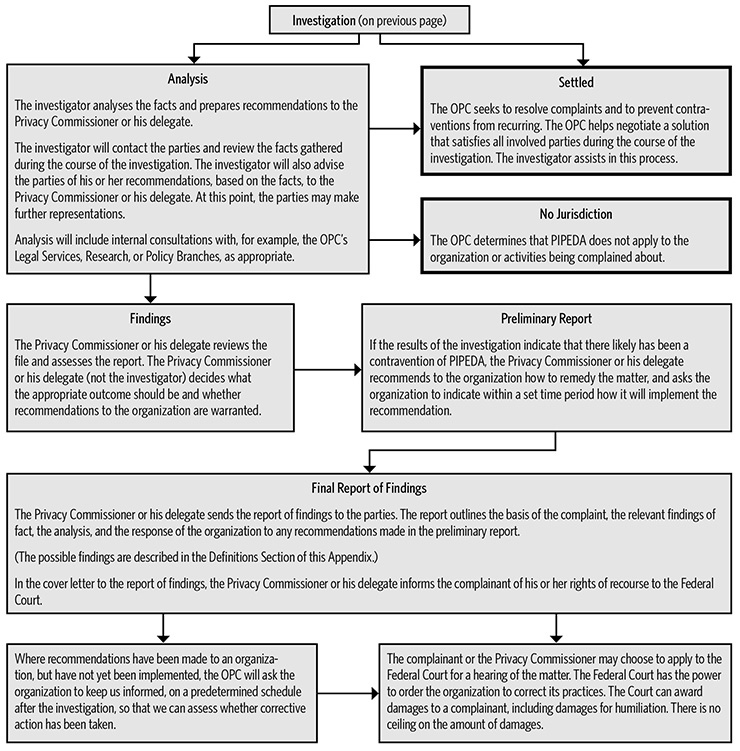

Despite these challenges, we work to ensure that the resources and tools we do have make the greatest possible impact for Canadians. For example, we are making increased use of early resolution. Following a diagnostic review of our Privacy Act compliance activities, we are implementing a new risk management framework under which matters posing the greatest impact on privacy will receive higher investigative priority. We will also work more closely with federal institutions to support stronger compliance.

Meanwhile, we continue efforts to raise public awareness, to help organizations and individuals understand their rights and responsibilities. This past year, for example, we launched new multi-year outreach strategies to connect with youth, seniors and small businesses. We know that individuals and organizations overwhelmingly go online first for privacy information, be it about asserting rights or fulfilling their responsibilities. As a result, we have also been working to modernize our website to better meet Canadians’ needs.

Recognizing that privacy issues increasingly cross borders within and beyond Canada, my Office continues to work with provincial, territorial and international counterparts. In the past year, for example, we collaborated with our counterparts in Alberta and British Columbia to provide new guidance to organizations on Bring Your Own Device (BYOD) and an updated online security checklist.

On the international front, we coordinated the activities of 29 privacy authorities around the world engaged in the third annual Global Privacy Sweep which examined the privacy communications of companies marketing online to children. We also co-sponsored an international resolution which was unanimously adopted by data protection authorities around the world to encourage greater transparency around government institutions’ warrantless collection of organizations’ customer and employee personal information.

A final word

As the challenges presented by 21st century technologies mount and business models evolve, we face the reality that, despite our best efforts to find efficiencies and focus efforts, the tools we have to do our work to protect and promote privacy are increasingly insufficient.

Changes to legislation, legal frameworks, business and departmental practices, as well as individual awareness levels are required for Canada to once again emerge as a leader in privacy protection and ultimately for Canadians to have better control of their personal information.

Privacy by the numbers

| Activity | Total |
|---|---|
| Information requests related to PIPEDA matters * | 4,747 |
| Information requests related to Privacy Act matters | 1,539 |
| Information requests related to neither Act | 3,810 |
| PIPEDA complaints accepted * | 381 |
| PIPEDA complaints closed through early resolution * | 230 |
| PIPEDA complaints closed through standard investigation * | 121 |
| PIPEDA data breach reports * | 115 |
| Privacy Act complaints accepted and processed for investigation | 1,389 |
| Privacy Act complaints accepted and placed in abeyance | 379 |
| Privacy Act complaints closed through early resolution | 460 |
| Privacy Act complaints closed through standard investigation | 766 |
| Privacy Act data breach reports | 298 |
| Privacy Impact Assessments (PIAs) received | 88 |
| PIAs reviewed as “high risk” | 39 |
| PIAs reviewed as “lower risk” | 35 |
| Public sector audits concluded | 1 |
| Public interest disclosures by federal organizations | 441 |
| Bills and legislation reviewed for privacy implications (private sector) * | 1 |
| Parliamentary committee appearances on private sector matters * | 2 |
| Formal briefs submitted on private sector matters * | 3 |
| Other interactions with parliamentarians or staff (for example, correspondence with MPs’ or Senators’ offices) on private sector matters * | 3 |
| Bills and legislation reviewed for privacy implications (public sector) | 7 |
| Parliamentary committee appearances on public sector matters | 6 |
| Formal briefs submitted on public sector matters | 2 |
| Other interactions with parliamentarians or staff on public sector matters | 3 |
| Speeches and presentations delivered | 116 |
| Visits to main website | 1,819,835 |
| Blog visits | 318,136 |
| YouTube site visits | 11,647 |
| Tweets sent | 650 |
| Twitter followers as of March 31, 2016 | 10,869 |
| Publications distributed | 26,512 |
| News releases and announcements issued | 19 |

Note: statistics marked with an asterisk (*) were collected from January 1, 2015 to March 31, 2016. All other statistics were collected from April 1, 2015 to March 31, 2016.

Chapter 1: Privacy Act reform

Canadian society and its federal institutions have experienced profound technological advances since 1983 when the Privacy Act first came into force. In accelerating fashion, the explosive growth in information and communication technologies over the past three decades has made it much easier and cheaper for governments to collect and retain personal information about their citizens.

The Privacy Act has remained virtually unchanged, while second- and even third-generation privacy laws have since been adopted at the provincial level and internationally.

The importance of keeping pace with the privacy protections in other countries—our trading and security partners in particular—cannot be overlooked. Data protection laws in the European Union (E.U.), for example, forbid disclosing personal information from an E.U. member to entities in other countries unless (among other exceptions) those countries have been deemed to have “adequate” data and privacy protections.

It used to be that the examination of the adequacy of protection provided by a foreign country looked squarely at its private sector privacy law. But given revelations over the last three years of significant information sharing between private sector organizations and government institutions, especially in North America, such examinations will now take into consideration a country’s full privacy legal regime, including its national security activities and the right of recourse for foreign nationals. E.U. officials will pay attention to these broader standards as they review Canadian laws on the question of adequacy.

Late in the last fiscal year, Parliament took an important first step toward reforming what were once world-leading information laws. Currently, the House of Commons Standing Committee on Access to Information, Privacy and Ethics is studying both the Access to Information Act and the Privacy Act. In March 2016, our Office appeared before the Committee and provided a submission with recommendations for changes to the latter. In contributing to the dialogue, our Office brings over 30 years of practical knowledge gained from interpreting and applying the current law, and has experienced its limitations first-hand.

Bringing the Privacy Act into the 21st century

Our submission on modernizing the Privacy Act included 16 recommendations covering three broad themes: responding to technological change; legislative modernization; and the need for transparency.

Technological change

Technological change has allowed government collection, storage and sharing of information to increase exponentially. Existing legal rules are simply not sufficient to regulate this kind of massive data sharing or to ensure that personal information held by federal institutions is adequately protected from unauthorized disclosure. Given the often-passionate public debate over Bill C-51, the Anti-Terrorism Act, 2015 (see chapter two), which enables even broader sharing of personal information among many federal departments and agencies, it is clear this subject is of interest to a great many Canadians.

Information sharing should be based on written agreements

In its current form, the Privacy Act allows federal institutions to share personal information under their control with other federal institutions, provincial governments, or foreign governments for a variety of reasons, including “for a use consistent with the purpose for which the information was collected.” In our experience, and given the current wording, organizations have historically argued for a very broad interpretation of “consistent use.”

We have recommended that the Privacy Act be amended to require all such information sharing to be subject to written agreements. Among other things, these would: describe the precise purpose for which the information is being shared; limit secondary use and onward transfer; and outline other measures to be prescribed by regulations, such as specific safeguards, retention periods and accountability measures. Above all, written agreements would provide Canadians with transparency in explaining how federal institutions use their personal information. We also recommended that our Office be given the authority to review, comment on and assess compliance with these agreements.

A legal requirement to safeguard personal information

Our Office has received hundreds of reports of data breaches from federal institutions, pointing to a lack of adequate safeguards. With advances in technology, government departments are collecting and using ever-greater amounts of personal information without necessarily having the adequate safeguards in place, increasing the risk and potential consequences of privacy breaches.

Over the years, we have seen massive government breaches affecting tens, even hundreds, of thousands of citizens. In 2012, for example, the department then known as Human Resources and Skills Development Canada (HRSDC) reported the loss of an external hard drive, holding the personal information of close to 600,000 people who’d participated in the Canada Student Loan program—names, dates of birth, social insurance numbers, addresses, phone numbers and financial information.

Surprisingly, given the amount of personal information individuals have no choice but to share with the federal government, the Privacy Act does not impose a specific legal obligation on departments to safeguard the personal information they hold. This is a fundamental data protection principle found in most privacy laws around the world, including PIPEDA, and we believe it should be included in the Privacy Act as well.

In some cases, significant privacy breaches have not even been reported to our Office. In 2013, Health Canada sent letters to more than 41,000 people across the country in windowed envelopes that showed not only the recipient’s name and address, but the fact the letter was from the department’s medical marijuana program. The department did not report this as a data breach. Several hundred people who received the letters felt differently, complaining to our Office that Health Canada had revealed sensitive personal information without their consent.

Today, Treasury Board of Canada Secretariat (TBS) policy requires federal institutions to report “material” data breaches to our Office. In 2015-2016, the second full fiscal year in which federal institutions faced this requirement, we received 298 reports, up from 256 the year before—and up from 109 in 2012-2013, the last fiscal year where reporting was voluntary. The time has come for this breach notification requirement to be elevated from the level of policy directive to that of law. Placing a specific legal obligation on federal institutions to report such privacy breaches to our Office would ensure we have a better picture of the current scope of the problem, and that we are consulted in the process of responding to the breach and mitigating its impact on individuals.

Such a change would avoid an emerging disconnect between Canada’s federal public and private sector privacy laws. Under recent amendments to PIPEDA, data breach reporting will soon be mandatory for private sector organizations. Mandatory privacy breach notification is a feature of many modern laws and was included in the revised Organisation for Economic Co-operation and Development (OECD) Privacy Guidelines in 2013.

Legislative modernization

The shift from paper-based to digital format records has led to a dynamic of over-collection—the federal appetite for our information has grown in direct proportion to the ease with which that information can be collected, a trend we have seen in numerous programs. As a first step, to ensure we do not again have a badly out-of-date law in the future, we have recommended a requirement for ongoing Parliamentary review of the Privacy Act every five years.

Limit collection to what is necessary

The Privacy Act states that “no personal information shall be collected by a government institution unless it relates directly to an operating program or activity of the institution.” We have interpreted this to mean that the collection of information must be necessary for the operating program or activity, an interpretation consistent with the TBS Directive on Privacy Practices and an important factor the E.U. will consider when examining the question of adequacy.

This interpretation is not consistently followed across the Government of Canada. In fact, in a recent court submission, the Attorney General of Canada explicitly rejected necessity as a standard for the collection of personal information under the Act, arguing instead for a broader interpretation of the term “unless it relates directly,” which would allow greater collection of personal information. This question is now before the Federal Court of Canada.

Furthermore, the Standard on Security Screening, which is set by TBS, has recently been amended to allow for much broader collection than had previously been the case. Our Office has obtained leave to intervene in a court challenge to the new standard launched by the Union of Correctional Officers of Canada. Our intervention will be as a neutral party, to help the court in its interpretation of this particular section of the Privacy Act. We also reviewed TBS’ privacy assessment of the new standard and are investigating a number of related complaints.

Considering privacy protection up front to prevent privacy risks

The TBS Directive on Privacy Impact Assessment (PIA) is meant to ensure privacy risks will be appropriately identified, assessed and mitigated before a new or substantially modified program or activity involving personal information is implemented. The Directive requires institutions to submit a copy of each PIA to our Office for review and comment. We have found, and institutions have told us, that this process is invaluable in identifying and mitigating privacy risks prior to project implementation. However, this policy requirement does not have the force of law. As a result, the practice, quality and timeliness of PIAs can be very uneven across institutions. Some institutions that handle significant amounts of sensitive personal information seldom submit PIAs to our Office.

Similarly, some institutions may decide not to do a PIA in circumstances where, in our view, one is clearly needed, or they may complete one only very late in rolling out a new program. For example, in the 2013 case of the Canada Border Services Agency (CBSA) High Integrity Personnel Security Screening Standard, our Office was not consulted prior to implementation, and we received a PIA only when the program was implemented. As a result, the new and more invasive screening measures began without our input, and a related complaint under the Privacy Act followed. A legislative requirement to complete a PIA prior to implementation could have resulted in privacy risks being highlighted and mitigated early on.

Complaint investigations and court actions are time-consuming and costly recourse mechanisms, which could be avoided if there were a legislative requirement to conduct PIAs on particularly risky programs before they are launched. Over the years, we have repeatedly noted how much more efficient and less expensive it is to identify and address privacy risks during the design of a program rather than having to modify one that is already up and running.

As a further step to identify privacy issues before they become privacy problems, we have recommended the Act also require government institutions to consult with our Office on draft legislation and regulations with privacy implications before they are tabled; a requirement already in effect in a number of jurisdictions in Canada and elsewhere.

Further, providing our Office with an explicit mandate to conduct education and research under the Privacy Act, a mandate that has been used to very good effect under PIPEDA, would enable us to better advance the purposes of the Privacy Act.

Enhancing transparency

Currently, the Act’s confidentiality provisions do not permit us to make public our investigation findings outside of annual and occasional special reports to Parliament. While we recognize that these provisions are reasonable in most cases, there should be some allowance made for limited exceptions, on grounds of public interest, as in PIPEDA. The primary goal of this discretion should be to inform Parliamentary debate and public discussions in a timely way.

In the past, our Office’s ability to inform debate and discussion has been hampered by the existing confidentiality constraints in the Privacy Act. For example, in both the case of the CBSA’s involvement with a television show (discussed in chapter six) and the case of departments collecting personal information from a First Nations advocate’s personal social network page (outlined in our 2012-2013 annual report), we were prevented from publicly sharing our findings until we reported to Parliament several months later.

In the further interest of transparency, we have also recommended that departments be required to report on their administration of the Privacy Act in a more comprehensible way. These departmental reports typically comprise an elaborate array of statistics on the number of personal information requests received and processed in a year, with little or no explanation of what the figures mean. If these reports are to be meaningful and useful in terms of transparency, they need to be intelligible.

We see a particular need for greater transparency reporting in the context of law enforcement. We have called on federal organizations to be open about the number, frequency and type of lawful access requests they make to internet service providers and other private sector organizations entrusted with customer information. The public, Parliamentarians and the privacy community in Canada have been advocating for more openness on this front for several years.

Maximize individuals’ access to their personal information

Providing individuals with access to their personal information held by federal institutions is an important enabler of transparency and open government. We have recommended both extending rights of access to foreign nationals and maximizing disclosure, as appropriate, when individuals seek access to their own personal information.

This involves limiting the exemptions the Act allows when responding to requests for access to personal information, ensuring such exemptions are generally injury-based and discretionary as appropriate, and severing protected information wherever possible.

Privacy Act should apply to all federal institutions

We believe, as a matter of principle, individuals should be able to access their personal information and challenge its accuracy regardless of where it is within government.

This would be consistent with one of the fundamental purposes for which Agents of Parliament were created: to serve as a window into the activities of the executive branch of government.

Expand Commissioner’s authority to share information for enforcement

It is now truer than ever that personal information knows no borders, particularly in a world facing global security threats. Recent amendments to PIPEDA provided clear authority for our Office to share information with counterparts domestically and internationally to facilitate enforcement collaboration in the private sector. We have recommended providing the Office with a similar explicit ability to collaborate with other data protection authorities and review bodies both nationally and internationally on audits and investigations of shared concern in connection with Privacy Act issues.

In conclusion

Canadians have come to expect more openness and transparency about how their personal information will be used by government, with whom it will be shared, and how it will be protected. Domestic and international privacy laws have moved the yardstick considerably since the Privacy Act came into force in 1983. The protections of the Act as it stands are proving to be increasingly out of touch with Canadians and their engagement with a digital world.

We believe the modernization of the Act would provide Canadians with the protections and privacy rights they expect and that reflect current technological realities, thinking and experience, in Canada and internationally.

We look forward to further discussions with Parliament on bringing Canada’s Privacy Act into the 21st century.

Chapter 2: C-51 and surveillance

Canada is not alone in seeking the most effective ways of protecting its citizens from threats to national security. Governments around the world are collecting and sharing more and more personal information with a view to detecting and preventing threats, and new technologies are enabling the collection and analysis of previously unimaginable amounts of data. In our democratic society, finding the appropriate balance between security and privacy is critical. Federal institutions with security mandates need to be able to protect Canadians, but their work must be done in ways that are consistent with the rule of law.

The ever-broader authority and capacity of government agencies to collect and share Canadians’ personal information was raised time and again in our consultations with Canadians during our priority setting exercise.

Participants understood the value of surveillance in the protection of national security and crime prevention—but questioned how surveillance and risk profiling without their knowledge might infringe on basic rights and freedoms. The discussions also included calls for greater transparency.

The breadth of these types of concerns was underscored by the national debate following the introduction of Bill C-51 (the Anti-Terrorism Act, 2015) in January of 2015. This and other legislation granting government departments and agencies new and greater authority to collect and share information poses great challenges to our existing frameworks for protecting privacy.

We must consider whether privacy protections developed in the early 1980s are adequate in this new era. Under our Government Surveillance strategic privacy priority, our ultimate goal is to contribute to the adoption and implementation of laws and other measures that demonstrably protect both national security and privacy.

C-51: the Anti-Terrorism Act, 2015

Bill C-51 received Royal Assent in June 2015 as the Anti-Terrorism Act, 2015, and came into force in August 2015. The Act introduced the Security of Canada Information Sharing Act (SCISA), about which our Office expressed serious concerns in submissions to a number of Parliamentary committees studying the Bill, including the Senate Committee on National Defence and Security.

Since then, a new government has been elected. It has committed to consulting on changes to the law, and our Office would welcome an opportunity to share our views.

While our Office welcomed legislation to create a Parliamentary committee to oversee matters related to national security as a positive first step, we have also recommended expert or administrative independent review or oversight of institutions permitted to receive information for national security purposes.

While the question of oversight has, in part, been addressed, our concerns regarding thresholds remain. SCISA’s current standard dictates that certain federal government institutions may share information amongst themselves so long as it is “relevant” to the identification of national security threats. In our view, that threshold is inadequate and could expose the personal information of law-abiding Canadians. A more reasonable threshold would be to allow sharing where “necessary.”

In line with government surveillance as one of our strategic priorities, we set out a number of steps we would take in the short and medium term to reduce the privacy risks associated with SCISA. We also committed to examine and report on how national security legislation such as Bill C-51 is implemented to ensure compliance with the Privacy Act and inform the public debate.

We stated that we would report our findings to Parliamentarians and the public, and issue recommendations for potential improvements to policies or legislation, as warranted.

We are following through on this commitment, having recently completed a review of the first six months of SCISA—how the Act is being implemented and applied. We have identified a number of concerns, and offered recommendations.

Review of the First Six Months of the Security of Canada Information Sharing Act

- The Security of Canada Information Sharing Act (SCISA) came into force on August 1, 2015. The stated purpose of the Act is to encourage and facilitate information sharing between Government of Canada institutions in order to protect against “activities that undermine the security of Canada”. In introducing the SCISA, the government stated that effective, efficient and responsible sharing of information between the various institutions of government is increasingly essential to identify, understand and respond to threats to national security. Under the Act, information may be disclosed if it is relevant to the recipient institution’s mandate or responsibilities in respect of activities that undermine the security of Canada, including in respect of the detection, identification, analysis, prevention, investigation or disruption of such activities. Protecting the security of Canadians is important, and we recognize that greater information sharing may assist in the identification and suppression of security threats.

- The Act is broadly worded and leaves much discretion to federal entities to interpret and define “activities that undermine the security of Canada”, potentially resulting in an inconsistent approach to its application. Moreover, the scale of information sharing that could occur under this Act is unprecedented. While a preliminary review of the data suggests limited use of the SCISA during its first six months of implementation, the potential for sharing on a much larger scale, combined with advances in technology, could allow personal information to be analyzed algorithmically to spot trends, predict behaviour and potentially profile ordinary Canadians with a view to identifying security threats among them. Our intent in future reviews will be to examine whether law-abiding citizens are indeed subject to these broad sharing powers, and if so, under what circumstances.

- There is currently some level of review or oversight of certain federal entities responsible for national security. However, 14 of the 17 entities authorized to receive information for national security purposes under the SCISA are not subject to dedicated independent review or oversight. We note that the government has announced its intention to create a new Parliamentary Committee with responsibility for national security-related issues.

- We initiated a review to inform stakeholders, including parliamentarians, on the extent of information sharing pursuant to the SCISA. A survey was issued to 128 Government of Canada institutions, specifically the 17 institutions which are authorized to both collect and disclose information under the SCISA and 111 federal institutions which may now disclose information to any of the 17 institutions. The survey covered the first six months that the SCISA was in force (August 1, 2015 to January 31, 2016).

- Our survey found that during the first six months that the SCISA was in force, five institutions reported having either collected or disclosed information pursuant to the Act. The Canada Border Services Agency, the Canadian Security Intelligence Service, Immigration, Refugees and Citizenship Canada, and the Royal Canadian Mounted Police reported that collectively they received (i.e. collected) information under the SCISA on 52 occasions. The survey also revealed that collectively, the Canada Border Services Agency, Immigration, Refugees and Citizenship Canada and Global Affairs Canada made a total of 58 disclosures under the SCISA during the same time period. All of the other 111 federal institutions surveyed reported that they had not disclosed information under the SCISA. We also made general enquiries about the nature of the sharing activities. The enquiries were made to obtain an indication of the potential risk to law-abiding citizens. We asked whether information shared involved specific individuals as opposed to categories of individuals. As well, we wanted to know if the information shared included individuals not suspected of undermining the security of Canada at the time of disclosure. In responding to our survey, the entities reported that information shared under the SCISA was for named individuals suspected of undermining the security of Canada.

- There are legal authorities that existed before the SCISA that permit the collection and disclosure of information for national security purposes. Some of these authorities are also very broad, including the common law powers vested in the police and others and the Crown prerogative of defence. The survey found that 13 of the 17 entities used pre-existing authorities for such sharing activities. We did not enquire about the breadth of information shared. However, nine entities confirmed that the information sharing involved specific individuals.

- Public Safety Canada (PS) is responsible for all matters relating to public safety and emergency management that have not been assigned to another institution of the Government of Canada. The department is also responsible for the coordination of the Public Safety Portfolio, including the Royal Canadian Mounted Police, the Canadian Security Intelligence Service and the Canada Border Services Agency. Although the Act does allow for the Governor in Council, on the recommendation of the Minister of Public Safety and Emergency Preparedness, to make regulations for implementing the SCISA—including regulations respecting disclosures, record keeping and retention requirements under the Act—it has not done so to date.

- To support the implementation of the SCISA, PS prepared the DeskBook (a guidance document for employees in federal government institutions) and the publicly available Security of Canada Information Sharing Act: Public Framework. These documents were examined as part of our review. Although they generally advocate responsible information sharing, the documents lack specificity and detail on how departments and agencies should achieve this in a manner that also respects privacy. Specifically, we found that the DeskBook lacks:

- Guidance on the need for and core elements that should form part of information sharing agreements;

- Sufficient explanation and examples, including case scenarios, that establish the thresholds for sharing and using information pursuant to the SCISA;

- Guidance on the importance of preventing inadvertent disclosures of personal information during discussions between disclosing and receiving institutions;

- Explanation of the factors that would militate against disclosure;

- Guidance on the content of records that should be kept, including a description of the information shared and the rationale for disclosure; and

- Guidance for destroying or returning information that cannot be lawfully collected.

- The Treasury Board of Canada Secretariat (TBS) Directive on Privacy Impact Assessment (PIA) came into force in 2010. The Directive is designed to ensure privacy protection is a core consideration in the initial framing and subsequent administration of programs and activities involving personal information. This was partly in response to Canadians and parliamentarians who expressed concerns about the complex and sensitive privacy implications surrounding proactive anti-terrorism measures, the use of surveillance and privacy-intrusive technologies, the sharing of personal information across borders and the threats to privacy posed by security breaches.

- We looked at whether the PS DeskBook provided clear guidance with regard to the requirement to complete PIAs, both in terms of the collection and disclosure of information pursuant to the SCISA. We note that the DeskBook indicates that “PIAs should not require amendments, unless normal triggers for amending PIAs are present”. Although collection authorities may not change for institutions that receive information under the SCISA, it is clearly intended that more and different information may be received than was the case prior to the enactment of the Act. The disclosure of information for purposes other than that for which it was collected constitutes a substantial modification to a program or activity of the institution. According to the PIA Directive, such activities would trigger the need for a new or amended PIA. The PIA guidance provided in the PS DeskBook should align with the requirements and intent of the TBS directive.

- Of the 17 entities authorized to collect information under the SCISA, 12 had undertaken some form of analysis to determine whether Privacy Impact Assessments (PIA) for their respective information sharing processes were necessary. Of these, two of the entities indicated that PIAs were deemed necessary and were under development.

- As part of our survey, we asked institutions whether they developed policies and guidance documents to operationalize the Act. As reported above, five institutions collected and/or disclosed personal information pursuant to the SCISA during the review period. Of these, three had developed such documents. We examined them and found that they lacked specificity and detail to provide meaningful assistance to employees to help them determine whether SCISA thresholds have been met. This small sample underscores the importance of having clear government-wide guidance to operationalize the SCISA.

- Recommendation: Public Safety Canada should provide government institutions with sufficient guidance and direction to ensure that:

- Information-sharing agreements are put in place and contain core privacy protection provisions;

- The thresholds for using the SCISA—for collection and disclosure purposes—are understood;

- Discussions between disclosing and receiving institutions do not result in an inadvertent disclosure of personal information;

- Factors that would militate against disclosure are explained;

- Appropriate record keeping practices are in place;

- The privacy impacts of SCISA-related collection and disclosure activities are assessed; and

- Information that cannot be lawfully collected is immediately destroyed or returned to the originating institution.

Departmental response: Public Safety Canada agrees with the recommendation.

The Department has provided guidance to institutions on the Security of Canada Information Sharing Act (SCISA), and Public Safety Canada will continue to do so in the future. For example, Public Safety Canada will provide further guidance on appropriate record-keeping practices, on the threshold for disclosure, and on the need to immediately destroy or return to the originating institution information that cannot be lawfully collected by a recipient, and on the other issues identified in the recommendation.

The Department of Public Safety and Emergency Preparedness Act provides the Minister of Public Safety and Emergency Preparedness and by extension, the Department the authority to “coordinate, implement or promote policies, programs, or projects relating to public safety and emergency preparedness” and “facilitate the sharing of information, where authorized, to promote public safety objectives.” In keeping with this mandate, the guidance prepared by Public Safety Canada on the SCISA and its disclosure authority is provided to institutions to help them in understanding the Act. Each Deputy Head is accountable for ensuring the proper implementation of the SCISA in their respective institution.

- The next phase of our review will focus on verifying the details and nature of the personal information sharing activity pursuant to the SCISA, in part to confirm the information given to us by departments. It will also examine the exchange of personal information—for national security purposes—using legal authorities other than the SCISA. Our goal is to provide as clear a picture as we can of the use of SCISA and other authorities, to inform the public and parliamentary debate that will take place in the course of the review of Bill C-51 that was announced by the government. Our hope is that our work in this area will result in the adoption of measures to protect privacy effectively in relation to the collection and sharing of national security information.

The next phase of review activities will commence in fiscal year 2016-2017.

Security agency metadata sharing leads to review and recommendations by our Office

In January 2016, the Minister of National Defence announced that, until further notice, the Communications Security Establishment (CSE) would no longer share certain metadata with its international security partners. The announcement followed the release of the 2014-15 Annual Report of the Office of the CSE Commissioner (the CSE’s oversight authority), which reported that information revealing details about the communication activities of Canadians was, due to a filtering technique that became defective, not being properly minimized (for example, removed, altered, masked or otherwise rendered unidentifiable) before being shared with “Five Eyes” partners—the signals intelligence agencies of Australia, New Zealand, the United Kingdom and the United States.

As noted in the CSE Commissioner’s report, the CSE discovered in late 2013 that certain metadata was not being properly minimized. Although it was able to confirm that protections were in place in 2008, the CSE could not say for sure how long after that the problem arose, or how much metadata that was not minimized had been shared, before the 2013 discovery. It did however tell us that it shared large volumes of metadata with partners, some of which may have had a “Canadian privacy interest.”

Given the potential impact on Canadians’ privacy, our Office conducted a review of the circumstances that allowed this situation to arise. In April 2016, we shared our observations and recommendations with the CSE.

What is Metadata?

The classic definition is that it is “data about data.” It is not the content of an email or telephone conversation, but all the other information about the communication. Our email metadata, for example, would reveal who we sent emails to, when we sent them, our email and IP addresses, the recipients’ email addresses, email client login records with IP addresses, the subject lines of the emails, and more. In the digital age, we generate metadata constantly, and when it is all combined and analyzed, it can reveal a great deal about who we are: not just our identity, but our habits and interests, the places we go and the people we associate with. To better understand and raise awareness of the potential impacts on privacy, our Office has conducted substantial research into metadata.

CSE’s assessment of the breach

The CSE contended that the risk to privacy was minimal, because:

- The metadata did not constitute sensitive private information as it did not include names, contextual details related to individuals or contents of communications;

- Further analysis of the metadata would be required in order to identify specific individuals; and

- Five Eyes partners have all made commitments to carry out their operations while respecting the privacy of one another’s citizens.

Need for greater assurance

We questioned the CSE’s contention that the risk was low for the following reasons:

- On the potential sensitivity of the data shared with partners, research by our Office and others, including a recent report from Stanford University, demonstrates that metadata can reveal very sensitive information about individuals’ activities, associates, interests and lives.

- On the issue of partners’ commitments not to spy on one another’s populations, we have no reason to doubt this pledge, but at the same time, such assurances cannot be construed as absolute guarantees. In fact, CSE officials told us words to the effect that ‘states may do what they must to protect their national interest and security.’

- CSE acknowledged that the amount of metadata improperly shared with Five Eyes partners was large.

Following our review, we recommended that, going forward, before it resumes sharing metadata, the CSE should conduct a full Privacy Impact Assessment (PIA) on the program in accordance with the Treasury Board of Canada Secretariat’s Directive on PIA. We also offered the expertise of our Office to assist in the process of clarifying the metadata Ministerial directive, and recommended that the National Defence Act be amended not only to clarify the CSE’s powers—as suggested by the Office of the CSE Commissioner—but that those powers be accompanied with specific legal safeguards to protect the privacy of Canadians.

Warrantless access and the ongoing need for greater transparency

The legal controversy around “warrantless access” refers to the practice of law enforcement agencies seeking information about individuals from their telecommunications and Internet service providers without first obtaining court authorization.

In R. v. Spencer, the Supreme Court stated that a warrant is needed in all circumstances except where: 1) there are exigent circumstances, such as where the information is required to prevent imminent bodily harm; 2) there is a reasonable law authorizing access; or 3) the information being sought does not raise a reasonable expectation of privacy.

Since this June 2014 ruling, many telecommunications companies and Internet service providers have required warrants or production orders when police officers seek confidential subscriber data.

Some law enforcement officials have said it has made their jobs impossible, arguing such a legal requirement is untenable in an era where more and more criminal activity has migrated online, where anonymity is often the norm.

However, an IP address can reveal a great deal about an individual. Access to basic subscriber information linked with Internet activity can unlock details of a person’s interests based on websites visited, their organizational affiliations, where they have been and the online services for which they have registered.

Consequently, impartial oversight in the form of judicial authorization is critical before sensitive personal information may be turned over to the State. Courts are best placed to balance the interests of the police and of individuals. It is only in exceptional circumstances that warrantless access is and should be permitted.

Progress on transparency

Following the Supreme Court’s landmark decision in R. v. Spencer, some telecommunications and other service providers began issuing their own, voluntary reports on requests from government authorities for information about their customers and clients. While we found these reports to be helpful, companies provided different information in varying forms, making it difficult to draw an accurate picture of the number and types of requests that were coming from government authorities, and how companies were responding to them.

In June 2015, following consultation with our Office and various other stakeholders, Innovation, Science and Economic Development Canada issued new transparency reporting guidelines for private sector organizations. While reporting remains voluntary, the guidelines seek to achieve more uniform reports and better inform Canadians about how often, and in what circumstances, businesses provide customer information to law enforcement and security agencies.

Going forward, we hope companies follow the guidelines and that we begin to see more consistent reporting. For companies that have yet to produce such reports, we hope they will see the value of transparency and share relevant information with the public. If not, we may resume our call for legislative changes in this area.

Need for public sector action

While a good first step, private sector reporting provides only part of the picture. Further transparency from the public sector is needed to shed light on how the use of powers to obtain personal information lines up with the associated privacy risks.

To match the momentum started within the private sector, we have asked federal institutions to issue their own transparency reports about requests they make to private sector organizations for customer information. This was part of our recommendations on Privacy Act reform, discussed in chapter one.

We have called on federal institutions to maintain accurate records and to report publicly on the nature, purpose and number of lawful access requests they make to telecommunications companies. Such an approach would give citizens and Parliament greater insight into how federal institutions are using their lawful access powers.

In conclusion

One point on which all voices in the debate around public safety and privacy would agree is that much has changed over the last two decades. National security threats no longer come only from beyond our borders but sometimes from within them, and we recognize that the online environment poses new challenges for policing.

On the other hand, recent legislative changes have raised concerns about the possibility of intrusive monitoring and profiling of ordinary Canadians.

Canadians value security in the face of threats confronting the world today, but they also care deeply about their privacy. They want to ensure laws and procedures are in place that respect our values, and they want law enforcement and national security agencies to do their job lawfully.

When it comes to security and privacy, rather than wanting one over the other, Canadians rightly want both. Finding the right balance is absolutely critical because the repercussions can be so serious when that equilibrium shifts too far one way or the other.

In pursuit of a better balance, we have recommended, for example, changing SCISA’s information sharing threshold from “relevance” to “necessity;” that private and public sector institutions follow through on transparency reporting; and amending the National Defence Act to add legal safeguards for protecting personal information collected and used by the CSE.

Chapter 3: Consent and the economics of personal information

The fact that personal information has commercial value is well established. Over time, as marketing became more sophisticated, companies moved beyond collecting names and addresses and started asking for more and more of our personal information—before mailing in the little card to register a new product, for example, we might be asked to tick off boxes about our income, whether we owned or rented our home, and how we heard about the product we’d just purchased.

Today, even that kind of one-on-one information transaction, in which we knew who was asking, had a reasonable chance of understanding why they were asking, and could choose to check the boxes or not, is a thing of the past. As we search, surf and shop on the Internet, expand our social media profiles or add a new app to our smart phone, we are constantly providing personal information about our interests, our habits and our location.

It has become increasingly difficult to know what personal information is being collected from us and by whom—let alone understand the 21st century business models fuelled by personal information and the automated processes that make them work.

It is ironic that, while the commercial potential of our personal information has increased dramatically, the investment required to obtain, store and analyze it is often minimal—as much as we are in the age of Big Data, we are also in the age of cheap data. Thanks to technological advances and the increased willingness on the part of people to put information about themselves online, it is very easy and inexpensive to collect astronomical amounts of personal information and use it for commercial ends—web-crawling software that spammers use to collect email addresses is just one example.

The right to consent

To protect our privacy in this increasingly digital environment, we rely on the Personal Information Protection and Electronic Documents Act (PIPEDA).

In many ways, consent is the cornerstone of this legislation. Organizations are required to obtain individuals’ consent to lawfully collect, use and disclose personal information in the course of commercial activity. Without consent, the circumstances under which organizations are allowed to process personal information are limited.

However, while it was written to be technology neutral, this legislation predates smart phones, cloud computing, Facebook, the Internet of Things and so many other information-gathering technologies that are now part of the everyday. It is no longer entirely clear who is processing our data and for what purposes.

Is it fair, then, to saddle consumers with the responsibility of having to make sense of these complex data flows in order to make an informed choice about whether or not to provide consent? Technology and business models have changed so significantly since PIPEDA was drafted that many now consider the consent model, as originally conceived in the context of individual business transactions, to be no longer up to the task.

Among the stakeholders we consulted during our priority setting exercise, there were numerous questions about the efficacy and suitability of the PIPEDA consent model in the context of Big Data and the myriad and opaque ways our information can be collected. Many held the view that individuals are much less able to exert control and provide meaningful consent based on privacy policies that are often hard to understand, excessively long yet incomplete and/or ineffective. Canadians who participated in focus groups held as part of our priority setting exercise told us much the same thing, expressing concerns about not having enough control over their online information. They felt uninformed about what their personal information was being used for and by whom and felt online privacy policies were generally incomprehensible.

Consent in the 21st century

In May 2016, our Office released a discussion paper on consent and privacy. In it, we consider the role of individuals, organizations, regulators and legislators and what might be expected of each of these parties in the future. We look at how other countries have dealt with the matter and outline a number of potential solutions.

For example, we propose measures that would enhance consent by giving individuals better access to information or the ability to manage preferences across different services.

Possible solutions that serve as alternatives to the consent model are predicated on the notion that information flows have become too complex for the average person and that the ultimate solution is a relaxing of requirements for consent in certain circumstances. For example, the European Union allows data processing without consent if it is necessary for legitimate purposes and does not intrude on the rights of individuals.

A possible solution for Canada may be to broaden the permissible grounds for processing under PIPEDA to include legitimate business interests, either as a flexible concept or by defining specific legitimate interests in law. We might also consider legislating “no-go” zones, which would outright prohibit the collection, use or disclosure of personal information in certain circumstances.

Governance solutions focus on the role organizations play and could involve things like industry codes of practice, privacy trust-marks or the creation of consumer ethics boards to advise businesses on appropriate uses of data.

Such solutions, however, raise questions about the role of regulators and what authorities are required to effectively hold organizations to account. Order-making powers and fines, which our Office doesn’t currently have, are some examples of enforcement measures that could influence an organization’s practices and strengthen privacy protections for individuals. As well, currently our Office plays a more reactive role. We generally investigate complaints after a violation has occurred. Would it be reasonable to give our Office the authority to oversee compliance with privacy legislation more proactively, before problems arise? While many proposed solutions can be implemented within the current legal framework, others, such as the expansion of powers, may require legislative change.

Other questions that could fall to law-makers include whether to legislate no-go zones, new legal grounds for processing where consent isn’t practicable, or “privacy by design,” which would require companies to integrate privacy protections into new products and services.

We have since invited written feedback to our consent paper and in the fall will be speaking directly with stakeholders—businesses, advocacy groups, academics, educators, IT specialists and everyday Internet users. While it is unlikely that any one solution could serve as the proverbial “silver bullet,” we believe a combination of solutions may help individuals achieve greater privacy protection, which is our ultimate goal.

The Internet of Things

The Internet of Things (IoT)—the term used to describe the growing number of physical objects that collect data using sensors and share it over telecommunications networks—presents unique challenges to consent-based privacy protection frameworks.

IoT provides individual and societal benefits through increased automation and monitoring of all aspects of the environment, potentially leading to better management of resources, increased efficiencies and added convenience. IoT applications can be used to lower home energy costs by running appliances when electricity is cheaper, or to manage traffic flow by monitoring the number of vehicles through road-embedded sensors.

As discussed in our February 2016 IoT research paper, the collection of IoT information is motivated by a desire to understand individuals’ activities, movements and preferences, and inferences can be drawn about individuals from this information. For organizations, the value lies not in the revenue from selling devices but in the data that is generated and processed through big data algorithms.

Much of this data may be sensitive, or be rendered sensitive by combining data from different sources. For example, combining data generated by an individual carrying a smart phone, wearing a fitness tracker, and living in a home with a smart meter can yield a profile that can include physical location, associates, likes and interests, heart rate, and likely activity at any given time. If combined with other data collected in different ways—our Internet activity, for example—the information becomes more sensitive and more valuable.

Data collection by IoT devices is often invisible to individuals. There is no interface between consumers and organizations where data is exchanged in a visible and transparent way. Instead, data collection and sharing occur device to device, without human involvement, as a result of routine activities. This makes it increasingly challenging to relay meaningful information about privacy risks in order to inform the user’s decision about whether or not to provide consent.

Following up on Bell’s Relevant Advertising Program

In October 2013, our Office received an unprecedented number of complaints following the introduction of Bell’s “Relevant Advertising Program.” This program involved tracking customers’ Internet browsing, app usage, telephone calling and television viewing activity. Bell combined this information with demographic data from customer accounts to create detailed profiles that helped third-party advertisers deliver targeted ads to Bell subscribers, for a fee.

Bell put the onus on customers unwilling to participate in the program to take steps to opt out. We concluded that customers should instead be asked to opt in; in other words, to expressly choose to consent.

Following our investigation, Bell said it was cancelling the program and deleting all existing customer profiles related to the initiative. It later advised that it planned to launch a similar program using opt-in consent and asked for our views on the revised initiative.

Given the unprecedented number of complaints about the previous program and the potential privacy impacts of this type of targeted advertising on millions of individuals, we felt it was in the interest of Canadians to review and provide comments to Bell on the revamped program, and to that end, our Office had a number of discussions with the company.

While we are not in a position to say whether the new program meets the obligations set out under PIPEDA, we believe the new program is an improvement upon the one we investigated previously in that Bell is asking its customers whether they wish to participate on an opt-in basis. As we have previously stated, we see online behavioural advertising (OBA) as a legitimate activity if done correctly with the proper consent. We have provided suggestions for organizations in our Guidelines on online behavioural advertising.

Key investigation findings involving consent issues

Posting an email address online isn’t providing consent to be spammed

Following the launch of the Canadian Radio-television and Telecommunications Commission (CRTC) Spam Reporting Centre, we noted hundreds of submissions from the public about the e-mail marketing activities of Compu-Finder, a Quebec-based corporate training provider. This led to our first-ever investigation under the address harvesting provisions of PIPEDA introduced by Canada’s anti-spam law (CASL).

During our investigation, the company reported that as of January 2014, it had some 475,000 e-mail addresses on file, about 170,000 of which it had collected using address-harvesting software. While the company claimed it stopped collecting e-mail addresses with such software before CASL came into force in July 2014, we found it clearly continued to use some of these addresses for marketing purposes afterwards.

Compu-Finder said it collected addresses from websites of companies which it believed would be interested in its training and which, under Quebec law, had an obligation to provide such training. We found, however, that while its training sessions were offered almost exclusively in French at facilities in Montreal and Quebec City, the company was continually sending emails to recipients across Canada and even overseas.

Compu-Finder told us it thought email addresses posted on websites could be collected without consent under the “publicly available” exception in PIPEDA. In our view, this exception did not apply. Compu-Finder was using the addresses to sell services not always directly related to the purposes for which organizations had posted individuals’ e-mail addresses on their websites—such as a computer science professor who received an email promoting a course for finance directors.

We also found that some of the sites from which the company collected addresses had clear statements that email addresses on the site were not to be used for solicitation. In any event, the publicly available exception in PIPEDA cannot be claimed if an address was collected with address-harvesting software.

It was clear Compu-Finder was either not aware of or did not respect its privacy obligations under PIPEDA. The company eventually agreed to implement all of our recommendations and to enter into a compliance agreement, marking our first use of this new tool made possible by changes to PIPEDA introduced by the Digital Privacy Act, which received Royal Assent in June 2015.

Companies should read and understand PIPEDA’s regulations carefully before determining if information is really “publicly available.” In April 2015, we also posted a tip sheet and guide that describe best practices in email marketing and how to comply with the new address-harvesting provisions in PIPEDA.

Customer gets signed up for credit card without consent

More and more often, we are asked to consent to the collection and use of our personal information by clicking an icon on a computer screen, a practice that creates risk. In this case, for example, the complainant told us that, while shopping at a retail store, he was approached by a salesperson and asked to join a loyalty program. The complainant stated that he agreed to join the loyalty program, but was surprised to receive a credit card from the retailer in the mail a few weeks later.

The complainant maintains he was never informed that he was applying for a credit card and, in fact, said he asked the salesperson directly if the application had anything to do with a credit card and was told it did not. In our investigation, we found much of the information on the credit application submitted in his name—including his phone number, occupation, annual income and monthly rent—was, in fact, inaccurate.

The retailer stated that the complainant knowingly provided his personal information for the purpose of obtaining a credit card, and provided his express consent for a credit check by checking a box on the tablet computer the salesperson was using to record the information. However, the retailer was unable to prove that the complainant ever saw the tablet screen; that he provided all the information included in the application; that he understood it would be used to collect his credit information; or that he (and not the retailer’s representative) actually clicked the requisite consent box.